AWS-Certified-DevOps-Engineer-Professional Exam Questions - Online Test


Passing the Amazon AWS-Certified-DevOps-Engineer-Professional exam on a short timeline is difficult without help. Certleader offers up-to-date Amazon AWS-Certified-DevOps-Engineer-Professional practice questions and practice guides for the AWS Certified DevOps Engineer Professional exam.

Free AWS-Certified-DevOps-Engineer-Professional sample questions follow:

NEW QUESTION 1

A company has an AWS CodePipeline pipeline that is configured with an Amazon S3 bucket in the eu-west-1 Region. The pipeline deploys an AWS Lambda application to the same Region. The pipeline consists of an AWS CodeBuild project build action and an AWS CloudFormation deploy action.

The CodeBuild project uses the aws cloudformation package AWS CLI command to build an artifact that contains the Lambda function code’s .zip file and the CloudFormation template. The CloudFormation deploy action references the CloudFormation template from the output artifact of the CodeBuild project’s build action.

The company wants to also deploy the Lambda application to the us-east-1 Region by using the pipeline in eu-west-1. A DevOps engineer has already updated the CodeBuild project to use the aws cloudformation package command to produce an additional output artifact for us-east-1.

Which combination of additional steps should the DevOps engineer take to meet these requirements? (Choose two.)

- A. Modify the CloudFormation template to include a parameter for the Lambda function code’s zip file location. Create a new CloudFormation deploy action for us-east-1 in the pipeline. Configure the new deploy action to pass in the us-east-1 artifact location as a parameter override.

- B. Create a new CloudFormation deploy action for us-east-1 in the pipeline. Configure the new deploy action to use the CloudFormation template from the us-east-1 output artifact.

- C. Create an S3 bucket in us-east-1. Configure the S3 bucket policy to allow CodePipeline to have read and write access.

- D. Create an S3 bucket in us-east-1. Configure S3 Cross-Region Replication (CRR) from the S3 bucket in eu-west-1 to the S3 bucket in us-east-1.

- E. Modify the pipeline to include the S3 bucket for us-east-1 as an artifact store. Create a new CloudFormation deploy action for us-east-1 in the pipeline. Configure the new deploy action to use the CloudFormation template from the us-east-1 output artifact.

Answer: AB

Explanation:

A. Modify the CloudFormation template to include a parameter that indicates the location of the .zip file containing the Lambda function's code. This allows the CloudFormation deploy action to use the correct artifact for each Region, which matters because a Lambda function must reference a code artifact stored in the same Region where the function is deployed.

B. Create a new CloudFormation deploy action for the us-east-1 Region within the pipeline. Configure this action to use the CloudFormation template from the artifact that was created specifically for us-east-1.
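As a rough illustration of the deploy action described above, the following sketch builds a CodePipeline action declaration as a plain Python dictionary. The `region`, `TemplatePath`, and `ParameterOverrides` fields and the `Fn::GetArtifactAtt` function belong to the CodePipeline CloudFormation action; the template parameter names `CodeBucket` and `CodeKey`, the artifact name, and the role ARN are hypothetical placeholders.

```python
import json


def build_deploy_action(region, artifact_name, template_file, stack_name, role_arn):
    """Return a CloudFormation deploy action declaration for one Region."""
    overrides = {
        # Hypothetical template parameters; Fn::GetArtifactAtt resolves to the
        # S3 location of the named input artifact at deploy time.
        "CodeBucket": {"Fn::GetArtifactAtt": [artifact_name, "BucketName"]},
        "CodeKey": {"Fn::GetArtifactAtt": [artifact_name, "ObjectKey"]},
    }
    return {
        "name": f"Deploy-{region}",
        "region": region,  # runs the action in a Region other than the pipeline's
        "actionTypeId": {
            "category": "Deploy",
            "owner": "AWS",
            "provider": "CloudFormation",
            "version": "1",
        },
        "inputArtifacts": [{"name": artifact_name}],
        "configuration": {
            "ActionMode": "CREATE_UPDATE",
            "StackName": stack_name,
            "RoleArn": role_arn,
            "TemplatePath": f"{artifact_name}::{template_file}",
            "ParameterOverrides": json.dumps(overrides),
        },
    }
```

A call such as `build_deploy_action("us-east-1", "BuildOutputUsEast1", "packaged.yaml", "lambda-app", role_arn)` would produce the second deploy action; the dictionary could then be placed in a stage of the pipeline definition.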

NEW QUESTION 2

A large enterprise is deploying a web application on AWS. The application runs on Amazon EC2 instances behind an Application Load Balancer. The instances run in an Auto Scaling group across multiple Availability Zones. The application stores data in an Amazon RDS for Oracle DB instance and Amazon DynamoDB. There are separate environments for development, testing, and production.

What is the MOST secure and flexible way to obtain password credentials during deployment?

- A. Retrieve an access key from an AWS Systems Manager SecureString parameter to access AWS services. Retrieve the database credentials from a Systems Manager SecureString parameter.

- B. Launch the EC2 instances with an EC2 IAM role to access AWS services. Retrieve the database credentials from AWS Secrets Manager.

- C. Retrieve an access key from an AWS Systems Manager plaintext parameter to access AWS services. Retrieve the database credentials from a Systems Manager SecureString parameter.

- D. Launch the EC2 instances with an EC2 IAM role to access AWS services. Store the database passwords in an encrypted config file with the application artifacts.

Answer: B

Explanation:

AWS Secrets Manager is a secrets management service that helps you protect access to your applications, services, and IT resources. This service enables you to easily rotate, manage, and retrieve database credentials, API keys, and other secrets throughout their lifecycle. Using Secrets Manager, you can secure and manage secrets used to access resources in the AWS Cloud, on third-party services, and on-premises. Systems Manager Parameter Store and AWS Secrets Manager are both secure options. However, Secrets Manager is more flexible and has more options, such as password generation. Reference: https://www.1strategy.com/blog/2019/02/28/aws-parameter-store-vs-aws-secrets-manager/
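To make the retrieval step concrete, here is a minimal sketch that reads database credentials through the Secrets Manager `get_secret_value` call. It assumes the secret stores a JSON document with `username` and `password` keys (the layout used for RDS secrets); the secret name is hypothetical, and the client is passed in so the function can be exercised without AWS access.

```python
import json


def load_db_credentials(secrets_client, secret_id):
    """Fetch a secret and return (username, password).

    secrets_client is expected to behave like boto3.client("secretsmanager").
    """
    response = secrets_client.get_secret_value(SecretId=secret_id)
    # Assumption: the secret value is a JSON object with these two keys.
    secret = json.loads(response["SecretString"])
    return secret["username"], secret["password"]
```

On an EC2 instance, the client would typically be created with `boto3.client("secretsmanager")`, which picks up temporary credentials from the instance's IAM role, so no access key has to be stored anywhere.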

NEW QUESTION 3

A company's application uses a fleet of Amazon EC2 On-Demand Instances to analyze and process data. The EC2 instances are in an Auto Scaling group. The Auto Scaling group is a target group for an Application Load Balancer (ALB). The application analyzes critical data that cannot tolerate interruption. The application also analyzes noncritical data that can withstand interruption.

The critical data analysis requires quick scalability in response to real-time application demand. The noncritical data analysis involves memory consumption. A DevOps engineer must implement a solution that reduces scale-out latency for the critical data. The solution also must process the noncritical data.

Which combination of steps will meet these requirements? (Select TWO.)

- A. For the critical data, modify the existing Auto Scaling group. Create a warm pool instance in the stopped state. Define the warm pool size. Create a new version of the launch template that has detailed monitoring enabled. Use Spot Instances.

- B. For the critical data, modify the existing Auto Scaling group. Create a warm pool instance in the stopped state. Define the warm pool size. Create a new version of the launch template that has detailed monitoring enabled. Use On-Demand Instances.

- C. For the critical data, modify the existing Auto Scaling group. Create a lifecycle hook to ensure that bootstrap scripts are completed successfully. Ensure that the application on the instances is ready to accept traffic before the instances are registered. Create a new version of the launch template that has detailed monitoring enabled.

- D. For the noncritical data, create a second Auto Scaling group that uses a launch template. Configure the launch template to install the unified Amazon CloudWatch agent and to configure the CloudWatch agent with a custom memory utilization metric. Use Spot Instances. Add the new Auto Scaling group as the target group for the ALB. Modify the application to use two target groups for critical data and noncritical data.

- E. For the noncritical data, create a second Auto Scaling group. Choose the predefined memory utilization metric type for the target tracking scaling policy. Use Spot Instances. Add the new Auto Scaling group as the target group for the ALB. Modify the application to use two target groups for critical data and noncritical data.

Answer: BD

Explanation:

✑ For the critical data, using a warm pool [1] can reduce the scale-out latency by having pre-initialized EC2 instances ready to serve the application traffic. Using On-Demand Instances can ensure that the instances are always available and not interrupted by Spot interruptions [2].

✑ For the noncritical data, using a second Auto Scaling group with Spot Instances can reduce the cost and leverage the unused capacity of EC2 [3]. Using a launch template with the CloudWatch agent [4] can enable the collection of memory utilization metrics, which can be used to scale the group based on the memory demand. Adding the second group as a target group for the ALB and modifying the application to use two target groups can enable routing the traffic based on the data type.

References: [1] Warm pools for Amazon EC2 Auto Scaling; [2] Amazon EC2 On-Demand Capacity Reservations; [3] Amazon EC2 Spot Instances; [4] Metrics collected by the CloudWatch agent
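The two pieces of configuration in this answer can be sketched as request payloads for the Auto Scaling `put_warm_pool` and `put_scaling_policy` API calls. This is an illustration under assumptions: the group names and policy name are hypothetical, and the memory metric assumes the CloudWatch agent publishes `mem_used_percent` in its default `CWAgent` namespace, aggregated by the `AutoScalingGroupName` dimension.

```python
def warm_pool_request(group_name, min_size):
    """Keyword arguments for put_warm_pool: keep stopped instances ready."""
    return {
        "AutoScalingGroupName": group_name,
        "MinSize": min_size,
        "PoolState": "Stopped",
    }


def memory_tracking_policy(group_name, target_percent):
    """Keyword arguments for put_scaling_policy using a custom memory metric."""
    return {
        "AutoScalingGroupName": group_name,
        "PolicyName": "memory-target-tracking",  # hypothetical name
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingConfiguration": {
            # There is no predefined memory metric type, so a customized
            # metric specification points at the agent's metric instead.
            "CustomizedMetricSpecification": {
                "MetricName": "mem_used_percent",
                "Namespace": "CWAgent",
                "Dimensions": [
                    {"Name": "AutoScalingGroupName", "Value": group_name}
                ],
                "Statistic": "Average",
            },
            "TargetValue": float(target_percent),
        },
    }
```

With boto3, these dictionaries could be passed as `client.put_warm_pool(**warm_pool_request(...))` and `client.put_scaling_policy(**memory_tracking_policy(...))` on an `autoscaling` client.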

NEW QUESTION 4

A company must encrypt all AMIs that the company shares across accounts. A DevOps engineer has access to a source account where an unencrypted custom AMI has been built. The DevOps engineer also has access to a target account where an Amazon EC2 Auto Scaling group will launch EC2 instances from the AMI. The DevOps engineer must share the AMI with the target account.

The company has created an AWS Key Management Service (AWS KMS) key in the source account.

Which additional steps should the DevOps engineer perform to meet the requirements? (Choose three.)

- A. In the source account, copy the unencrypted AMI to an encrypted AMI. Specify the KMS key in the copy action.

- B. In the source account, copy the unencrypted AMI to an encrypted AMI. Specify the default Amazon Elastic Block Store (Amazon EBS) encryption key in the copy action.

- C. In the source account, create a KMS grant that delegates permissions to the Auto Scaling group service-linked role in the target account.

- D. In the source account, modify the key policy to give the target account permissions to create a grant. In the target account, create a KMS grant that delegates permissions to the Auto Scaling group service-linked role.

- E. In the source account, share the unencrypted AMI with the target account.

- F. In the source account, share the encrypted AMI with the target account.

Answer: ADF

Explanation:

The Auto Scaling group service-linked role in the target account needs a KMS grant on the source account's key in order to decrypt the encrypted AMI when it launches instances. The default Amazon EBS encryption key is an AWS managed key, and AMIs encrypted with it cannot be shared across accounts, so the copy must use the KMS key that the company created. The following steps meet the requirements:

✑ In the source account, copy the unencrypted AMI to an encrypted AMI. Specify the KMS key in the copy action.

✑ In the source account, modify the key policy to give the target account permissions to create a grant. In the target account, create a KMS grant that delegates permissions to the Auto Scaling group service-linked role.

✑ In the source account, share the encrypted AMI with the target account.
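The grant described above can be sketched as the arguments to the KMS `create_grant` API call. The account ID and key ARN are hypothetical; the service-linked role path and the list of operations follow the pattern AWS documents for Auto Scaling with a cross-account customer managed key, and should be checked against current documentation before use.

```python
def service_linked_role_arn(account_id):
    """ARN of the default Auto Scaling service-linked role in an account."""
    return (
        f"arn:aws:iam::{account_id}:role/aws-service-role/"
        "autoscaling.amazonaws.com/AWSServiceRoleForAutoScaling"
    )


def grant_request(key_arn, target_account_id):
    """Keyword arguments for kms.create_grant, run in the target account."""
    return {
        "KeyId": key_arn,  # full ARN, because the key lives in another account
        "GranteePrincipal": service_linked_role_arn(target_account_id),
        "Operations": [
            "Encrypt",
            "Decrypt",
            "ReEncryptFrom",
            "ReEncryptTo",
            "GenerateDataKey",
            "GenerateDataKeyWithoutPlaintext",
            "DescribeKey",
            "CreateGrant",
        ],
    }
```

The call only succeeds if the key policy in the source account already allows the target account to use `kms:CreateGrant` on the key.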

NEW QUESTION 5

A company hosts applications in its AWS account. Each application logs to an individual Amazon CloudWatch log group. The company’s CloudWatch costs for ingestion are increasing.

A DevOps engineer needs to identify which applications are the source of the increased logging costs.

Which solution will meet these requirements?

- A. Use CloudWatch metrics to create a custom expression that identifies the CloudWatch log groups that have the most data being written to them.

- B. Use CloudWatch Logs Insights to create a set of queries for the application log groups to identify the number of logs written for a period of time.

- C. Use AWS Cost Explorer to generate a cost report that details the cost for CloudWatch usage.

- D. Use AWS CloudTrail to filter for CreateLogStream events for each application.

Answer: C

Explanation:

✑ Option A is incorrect because using CloudWatch metrics to create a custom expression that identifies the CloudWatch log groups that have the most data being written to them is not a valid solution. CloudWatch metrics do not provide information about the size or volume of data being ingested by CloudWatch logs. CloudWatch metrics only provide information about the number of events, bytes, and errors that occur within a log group or stream.

✑ Option B is incorrect because using CloudWatch Logs Insights to create a set of queries for the application log groups to identify the number of logs written for a period of time is not a valid solution. CloudWatch Logs Insights can help analyze and filter log events based on patterns and expressions, but it does not provide information about the cost or billing of CloudWatch logs. CloudWatch Logs Insights also charges based on the amount of data scanned by each query, which could increase the logging costs further.

✑ Option C is correct because using AWS Cost Explorer to generate a cost report that details the cost for CloudWatch usage is a valid solution. AWS Cost Explorer is a tool that helps visualize, understand, and manage AWS costs and usage over time. AWS Cost Explorer can generate custom reports that show the breakdown of costs by service, region, account, tag, or any other dimension. AWS Cost Explorer can also filter and group costs by usage type, which can help identify the specific CloudWatch log groups that are the source of the increased logging costs.

✑ Option D is incorrect because using AWS CloudTrail to filter for CreateLogStream events for each application is not a valid solution. AWS CloudTrail is a service that records API calls and account activity for AWS services, including CloudWatch logs. However, AWS CloudTrail does not provide information about the cost or billing of CloudWatch logs. Filtering for CreateLogStream events would only show when a new log stream was created within a log group, but not how much data was ingested or stored by that log stream.

References:

✑ CloudWatch Metrics

✑ CloudWatch Logs Insights

✑ AWS Cost Explorer

✑ AWS CloudTrail
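As a sketch of the Cost Explorer report mentioned in the explanation, the function below builds a request for the Cost Explorer `get_cost_and_usage` API that filters to CloudWatch and groups by usage type. The 30-day window is arbitrary, and the service value `AmazonCloudWatch` is an assumption about how the service appears in the `SERVICE` dimension of a given account.

```python
from datetime import date, timedelta


def cloudwatch_cost_request(end, days=30):
    """Keyword arguments for ce.get_cost_and_usage over the trailing window."""
    start = end - timedelta(days=days)
    return {
        "TimePeriod": {"Start": start.isoformat(), "End": end.isoformat()},
        "Granularity": "DAILY",
        "Metrics": ["UnblendedCost"],
        # Assumed service name; list dimension values first to confirm it.
        "Filter": {
            "Dimensions": {"Key": "SERVICE", "Values": ["AmazonCloudWatch"]}
        },
        # Usage types separate log ingestion from storage, metrics, and alarms.
        "GroupBy": [{"Type": "DIMENSION", "Key": "USAGE_TYPE"}],
    }
```

With boto3, the dictionary could be passed as `boto3.client("ce").get_cost_and_usage(**cloudwatch_cost_request(date.today()))`.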

NEW QUESTION 6

A company's development team uses AVMS Cloud Formation to deploy its application resources The team must use for an changes to the environment The team cannot use AWS Management Console or the AWS CLI to make manual changes directly.

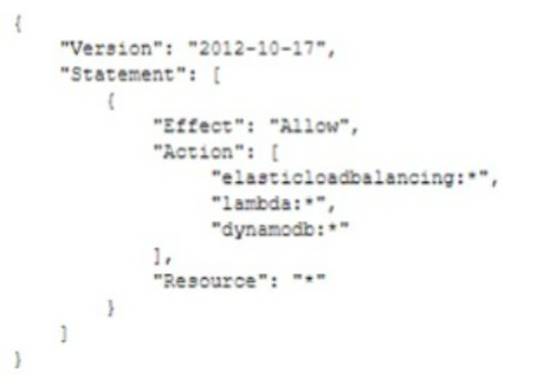

The team uses a developer IAM role to access the environment The role is configured with the Admnistratoraccess managed policy. The company has created a new Cloudformationdeployment IAM role that has the following policy.

The company wants ensure that only CloudFormation can use the new role. The development team cannot make any manual changes to the deployed resources.

Which combination of steps meet these requirements? (Select THREE.)

- A. Remove the AdministratorAccess policy. Assign the ReadOnlyAccess managed IAM policy to the developer role. Instruct the developers to use the CloudFormationDeployment role as a CloudFormation service role when the developers deploy new stacks.

- B. Update the trust of the CloudFormationDeployment role to allow the developer IAM role to assume the CloudFormationDeployment role.

- C. Configure the IAM user to be able to get and pass the CloudFormationDeployment role if cloudformation actions for resources.

- D. Update the trust of the CloudFormationDeployment role to allow the cloudformation.amazonaws.com AWS principal to perform the iam:AssumeRole action.

- E. Remove the AdministratorAccess policy. Assign the ReadOnlyAccess managed IAM policy to the developer role. Instruct the developers to assume the CloudFormationDeployment role when the developers deploy new stacks.

- F. Add an IAM policy to CloudFormationDeployment to allow cloudformation:* on all resources. Add a policy that allows the iam:PassRole action for the ARN of CloudFormationDeployment if iam:PassedToService equals cloudformation.amazonaws.com.

Answer: ADF

Explanation:

✑ Option A is correct because removing the AdministratorAccess policy and assigning the ReadOnlyAccess managed IAM policy to the developer role is a valid way to prevent the developers from making any manual changes to the deployed resources. The AdministratorAccess policy grants full access to all AWS resources and actions, which is not necessary for the developers. The ReadOnlyAccess policy grants read-only access to most AWS resources and actions, which is sufficient for the developers to view the status of their stacks. Instructing the developers to use the CloudFormationDeployment role as a CloudFormation service role when they deploy new stacks is also a valid way to ensure that only CloudFormation can use the new role. A CloudFormation service role is an IAM role that allows CloudFormation to make calls to resources in a stack on behalf of the user [1]. The user can specify a service role when they create or update a stack, and CloudFormation will use that role’s credentials for all operations that are performed on that stack [1].

✑ Option B is incorrect because updating the trust of CloudFormationDeployment role to allow the developer IAM role to assume the CloudFormationDeployment role is not a valid solution. This would allow the developers to manually assume the CloudFormationDeployment role and perform actions on the deployed resources, which is not what the company wants. The trust of CloudFormationDeployment role should only allow the cloudformation.amazonaws.com AWS principal to assume the role, as in option D.

✑ Option C is incorrect because configuring the IAM user to be able to get and pass the CloudFormationDeployment role if cloudformation actions for resources is not a valid solution. This would allow the developers to manually pass the CloudFormationDeployment role to other services or resources, which is not what the company wants. The IAM user should only be able to pass the CloudFormationDeployment role as a service role when they create or update a stack with CloudFormation, as in option A.

✑ Option D is correct because updating the trust of the CloudFormationDeployment role to allow the cloudformation.amazonaws.com AWS principal to perform the iam:AssumeRole action is a valid solution. This allows CloudFormation to assume the CloudFormationDeployment role and access resources in other services on behalf of the user [2]. The trust policy of an IAM role defines which entities can assume the role [2]. By specifying cloudformation.amazonaws.com as the principal, you grant permission only to CloudFormation to assume this role.

✑ Option E is incorrect because instructing the developers to assume the CloudFormationDeployment role when they deploy new stacks is not a valid solution. This would allow the developers to manually assume the CloudFormationDeployment role and perform actions on the deployed resources, which is not what the company wants. The developers should only use the CloudFormationDeployment role as a service role when they deploy new stacks with CloudFormation, as in option A.

✑ Option F is correct because adding an IAM policy to CloudFormationDeployment that allows cloudformation:* on all resources and adding a policy that allows the iam:PassRole action for the ARN of CloudFormationDeployment if iam:PassedToService equals cloudformation.amazonaws.com are valid solutions. The first policy allows any CloudFormation action on any resource [3]. The second policy allows the role to be passed only when the service that receives it is cloudformation.amazonaws.com [4]. This ensures that only CloudFormation can use this role.

References:

✑ 1: AWS CloudFormation service roles

✑ 2: How to use trust policies with IAM roles

✑ 3: AWS::IAM::Policy

✑ 4: IAM: Pass an IAM role to a specific AWS service
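The two policy documents discussed in options D and F can be sketched as Python dictionaries. This is an illustration, not the company's actual policy: the role ARN is hypothetical, and the trust policy uses `sts:AssumeRole`, which is the action name IAM trust policies use for role assumption.

```python
def cloudformation_trust_policy():
    """Trust policy that lets only the CloudFormation service assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "cloudformation.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def pass_role_statement(role_arn):
    """Statement that allows passing the role, but only to CloudFormation."""
    return {
        "Effect": "Allow",
        "Action": "iam:PassRole",
        "Resource": role_arn,
        "Condition": {
            "StringEquals": {
                "iam:PassedToService": "cloudformation.amazonaws.com"
            }
        },
    }
```

Because the trust policy names no IAM user or role as a principal, a developer cannot assume the role directly; the condition key on `iam:PassRole` then prevents the role from being handed to any other service.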

NEW QUESTION 7

A company wants to use a grid system for a proprietary enterprise in-memory data store on top of AWS. This system can run in multiple server nodes in any Linux-based distribution. The system must be able to reconfigure the entire cluster every time a node is added or removed. When adding or removing nodes, an /etc/cluster/nodes.config file must be updated, listing the IP addresses of the current node members of that cluster.

The company wants to automate the task of adding new nodes to a cluster. What can a DevOps engineer do to meet these requirements?

- A. Use AWS OpsWorks Stacks to layer the server nodes of that cluster. Create a Chef recipe that populates the content of the /etc/cluster/nodes.config file and restarts the service by using the current members of the layer. Assign that recipe to the Configure lifecycle event.

- B. Put the file nodes.config in version control. Create an AWS CodeDeploy deployment configuration and deployment group based on an Amazon EC2 tag value for the cluster nodes. When adding a new node to the cluster, update the file with all tagged instances and make a commit in version control. Deploy the new file and restart the services.

- C. Create an Amazon S3 bucket and upload a version of the /etc/cluster/nodes.config file. Create a crontab script that will poll for that S3 file and download it frequently. Use a process manager such as Monit or systemd to restart the cluster services when it detects that the new file was modified. When adding a node to the cluster, edit the file's most recent members. Upload the new file to the S3 bucket.

- D. Create a user data script that lists all members of the current security group of the cluster and automatically updates the /etc/cluster/nodes.config file whenever a new instance is added to the cluster.

Answer: A

Explanation:

You can run custom recipes manually, but the best approach is usually to have AWS OpsWorks Stacks run them automatically. Every layer has a set of built-in recipes assigned to each of five lifecycle events: Setup, Configure, Deploy, Undeploy, and Shutdown. Each time an event occurs for an instance, AWS OpsWorks Stacks runs the associated recipes for each of the instance's layers, which handle the corresponding tasks. For example, when an instance finishes booting, AWS OpsWorks Stacks triggers a Setup event. This event runs the associated layer's Setup recipes, which typically handle tasks such as installing and configuring packages. The Configure event occurs on all of the stack's instances whenever an instance enters or leaves the online state, which makes it the natural place to regenerate the node list.
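The core of what such a recipe does is regenerate the node list from the current layer members. A real OpsWorks recipe would be written in Chef (Ruby); the Python sketch below only illustrates the rendering logic, and it assumes a hypothetical file format of one IPv4 address per line.

```python
import ipaddress


def render_nodes_config(node_ips):
    """Return file contents listing each unique node IP once, in numeric order."""
    # Parsing validates the input and makes the sort numeric, not lexical.
    unique = {ipaddress.ip_address(ip) for ip in node_ips}
    return "".join(f"{ip}\n" for ip in sorted(unique))
```

Sorting gives every node the same file for the same membership, so a restart is only needed when the rendered content actually changes.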

NEW QUESTION 8

A company has a single AWS account that runs hundreds of Amazon EC2 instances in a single AWS Region. New EC2 instances are launched and terminated each hour in the account. The account also includes existing EC2 instances that have been running for longer than a week.

The company's security policy requires all running EC2 instances to use an EC2 instance profile. If an EC2 instance does not have an instance profile attached, the EC2 instance must use a default instance profile that has no IAM permissions assigned.

A DevOps engineer reviews the account and discovers EC2 instances that are running without an instance profile. During the review, the DevOps engineer also observes that new EC2 instances are being launched without an instance profile.

Which solution will ensure that an instance profile is attached to all existing and future EC2 instances in the Region?

- A. Configure an Amazon EventBridge rule that reacts to EC2 RunInstances API calls. Configure the rule to invoke an AWS Lambda function to attach the default instance profile to the EC2 instances.

- B. Configure the ec2-instance-profile-attached AWS Config managed rule with a trigger type of configuration changes. Configure an automatic remediation action that invokes an AWS Systems Manager Automation runbook to attach the default instance profile to the EC2 instances.

- C. Configure an Amazon EventBridge rule that reacts to EC2 StartInstances API calls. Configure the rule to invoke an AWS Systems Manager Automation runbook to attach the default instance profile to the EC2 instances.

- D. Configure the iam-role-managed-policy-check AWS Config managed rule with a trigger type of configuration changes. Configure an automatic remediation action that invokes an AWS Lambda function to attach the default instance profile to the EC2 instances.

Answer: B

Explanation:

https://docs.aws.amazon.com/config/latest/developerguide/ec2-instance-profile-attached.html
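A sketch of the automatic remediation for this rule, written as one entry for the AWS Config `put_remediation_configurations` API. It assumes the rule was created under the name `ec2-instance-profile-attached` and that the AWS-owned `AWS-AttachIAMToInstance` Automation runbook with `InstanceId`, `RoleName`, and `AutomationAssumeRole` parameters is used; the runbook name, its parameters, and the retry values should be verified before relying on them.

```python
def remediation_configuration(default_role_name, automation_role_arn):
    """One remediation entry that attaches a default role to flagged instances."""
    return {
        "ConfigRuleName": "ec2-instance-profile-attached",  # assumed rule name
        "TargetType": "SSM_DOCUMENT",
        "TargetId": "AWS-AttachIAMToInstance",  # assumed runbook, verify first
        "Automatic": True,
        "MaximumAutomaticAttempts": 3,
        "RetryAttemptSeconds": 60,
        "Parameters": {
            # RESOURCE_ID is replaced with the noncompliant instance ID.
            "InstanceId": {"ResourceValue": {"Value": "RESOURCE_ID"}},
            "RoleName": {"StaticValue": {"Values": [default_role_name]}},
            "AutomationAssumeRole": {
                "StaticValue": {"Values": [automation_role_arn]}
            },
        },
    }
```

Because AWS Config evaluates both recorded existing instances and configuration changes, the same remediation covers instances that are already running and ones launched later.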

NEW QUESTION 9

A media company has several thousand Amazon EC2 instances in an AWS account. The company is using Slack and a shared email inbox for team communications and important updates. A DevOps engineer needs to send all AWS-scheduled EC2 maintenance notifications to the Slack channel and the shared inbox. The solution must include the instances' Name and Owner tags.

Which solution will meet these requirements?

- A. Integrate AWS Trusted Advisor with AWS Config. Configure a custom AWS Config rule to invoke an AWS Lambda function to publish notifications to an Amazon Simple Notification Service (Amazon SNS) topic. Subscribe a Slack channel endpoint and the shared inbox to the topic.

- B. Use Amazon EventBridge to monitor for AWS Health Events. Configure the maintenance events to target an Amazon Simple Notification Service (Amazon SNS) topic. Subscribe an AWS Lambda function to the SNS topic to send notifications to the Slack channel and the shared inbox.

- C. Create an AWS Lambda function that sends EC2 maintenance notifications to the Slack channel and the shared inbox. Monitor EC2 health events by using Amazon CloudWatch metrics. Configure a CloudWatch alarm that invokes the Lambda function when a maintenance notification is received.

- D. Configure AWS Support integration with AWS CloudTrail. Create a CloudTrail lookup event to invoke an AWS Lambda function to pass EC2 maintenance notifications to Amazon Simple Notification Service (Amazon SNS). Configure Amazon SNS to target the Slack channel and the shared inbox.

Answer: B

Explanation:

https://docs.aws.amazon.com/health/latest/ug/cloudwatch-events-health.html
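For illustration, the EventBridge rule and the Lambda function's message formatting could look like the sketch below. The event pattern matches scheduled-change AWS Health events for EC2; the message layout and the `tags_by_instance` lookup (which the function would populate, for example from `describe_tags`) are assumptions.

```python
HEALTH_EVENT_PATTERN = {
    "source": ["aws.health"],
    "detail-type": ["AWS Health Event"],
    "detail": {"service": ["EC2"], "eventTypeCategory": ["scheduledChange"]},
}


def format_notification(event, tags_by_instance):
    """Build one message line per affected instance, including Name and Owner."""
    detail = event["detail"]
    lines = [detail["eventTypeCode"]]
    for entity in detail.get("affectedEntities", []):
        instance_id = entity["entityValue"]
        tags = tags_by_instance.get(instance_id, {})
        lines.append(
            f"{instance_id} Name={tags.get('Name', 'unknown')} "
            f"Owner={tags.get('Owner', 'unknown')}"
        )
    return "\n".join(lines)
```

The resulting text can be posted to the Slack webhook and sent to the shared inbox; enriching the event inside Lambda is what allows the tags to be included, since the Health event itself carries only resource IDs.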

NEW QUESTION 10

A company has containerized all of its in-house quality control applications. The company is running Jenkins on Amazon EC2 instances, which require patching and upgrading. The compliance officer has requested a DevOps engineer begin encrypting build artifacts since they contain company intellectual property.

What should the DevOps engineer do to accomplish this in the MOST maintainable manner?

- A. Automate patching and upgrading using AWS Systems Manager on EC2 instances and encrypt Amazon EBS volumes by default.

- B. Deploy Jenkins to an Amazon ECS cluster and copy build artifacts to an Amazon S3 bucket with default encryption enabled.

- C. Leverage AWS CodePipeline with a build action and encrypt the artifacts using AWS Secrets Manager.

- D. Use AWS CodeBuild with artifact encryption to replace the Jenkins instance running on EC2 instances.

Answer: D

Explanation:

The following are the steps involved in accomplishing this in the most maintainable manner:

✑ Use AWS CodeBuild with artifact encryption to replace the Jenkins instance running on EC2 instances.

✑ Configure CodeBuild to encrypt the build artifacts using an AWS KMS key.

✑ Run the builds for the containerized quality control applications in CodeBuild.

This approach is the most maintainable because it eliminates the need to manage Jenkins on EC2 instances. CodeBuild is a managed service, so the DevOps engineer does not need to worry about patching or upgrading the service.

https://docs.aws.amazon.com/codebuild/latest/userguide/security-encryption.html

Build artifact encryption: CodeBuild requires access to an AWS KMS CMK in order to encrypt its build output artifacts. By default, CodeBuild uses an AWS Key Management Service CMK for Amazon S3 in your AWS account. If you do not want to use this CMK, you must create and configure a customer-managed CMK. For more information, see Creating keys.
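As a small illustration of where artifact encryption is configured, the sketch below builds the encryption-related part of a CodeBuild `create_project` request. The bucket name and key ARN are hypothetical, and the other required project settings (source, environment, service role) are omitted.

```python
def encrypted_artifact_settings(bucket_name, kms_key_arn):
    """Partial create_project arguments covering artifact encryption only."""
    return {
        # Customer managed key; if omitted, CodeBuild falls back to the
        # AWS managed key for Amazon S3.
        "encryptionKey": kms_key_arn,
        "artifacts": {
            "type": "S3",
            "location": bucket_name,
            "packaging": "ZIP",
            "encryptionDisabled": False,
        },
    }
```

These keys would be merged into the full set of arguments passed to `create_project` on a boto3 `codebuild` client.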

NEW QUESTION 11

A company's production environment uses an AWS CodeDeploy blue/green deployment to deploy an application. The deployment incudes Amazon EC2 Auto Scaling groups that launch instances that run Amazon Linux 2.

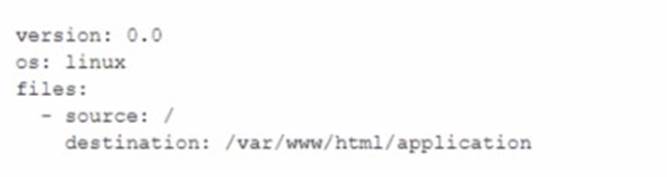

A working appspec. ymi file exists in the code repository and contains the following text.

A DevOps engineer needs to ensure that a script downloads and installs a license file onto the instances before the replacement instances start to handle request traffic. The DevOps engineer adds a hooks section to the appspec. yml file.

Which hook should the DevOps engineer use to run the script that downloads and installs the license file?

- A. AfterBlockTraffic

- B. BeforeBlockTraffic

- C. BeforeInstall

- D. DownloadBundle

Answer: C

Explanation:

This hook runs before the new application version is installed on the replacement instances. This is the best place to run the script because it ensures that the license file is downloaded and installed before the replacement instances start to handle request traffic. If you use any other hook, you may encounter errors or inconsistencies in your application.
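The reasoning can be checked against the lifecycle event order. The sketch below lists the events that run on replacement instances in a blue/green EC2 deployment, as the CodeDeploy AppSpec documentation describes them to the best of my understanding, and filters out the events that the CodeDeploy agent reserves for itself and that cannot run scripts.

```python
# Events on replacement instances, in order (assumed from the AppSpec docs).
REPLACEMENT_INSTANCE_EVENTS = [
    "ApplicationStop",
    "DownloadBundle",
    "BeforeInstall",
    "Install",
    "AfterInstall",
    "ApplicationStart",
    "ValidateService",
    "BeforeAllowTraffic",
    "AllowTraffic",
    "AfterAllowTraffic",
]

# Reserved for the agent; scripts cannot be attached to these.
RESERVED_EVENTS = {"DownloadBundle", "Install", "AllowTraffic"}


def scriptable_hooks_before_traffic():
    """Hooks that can run a script before replacement instances take traffic."""
    cutoff = REPLACEMENT_INSTANCE_EVENTS.index("AllowTraffic")
    return [
        event
        for event in REPLACEMENT_INSTANCE_EVENTS[:cutoff]
        if event not in RESERVED_EVENTS
    ]
```

Of the four answer options, only BeforeInstall appears in the result: DownloadBundle is reserved, and the BlockTraffic hooks run on the original instances rather than the replacements.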

NEW QUESTION 12

A company's developers use Amazon EC2 instances as remote workstations. The company is concerned that users can create or modify EC2 security groups to allow unrestricted inbound access.

A DevOps engineer needs to develop a solution to detect when users create unrestricted security group rules. The solution must detect changes to security group rules in near real time, remove unrestricted rules, and send email notifications to the security team. The DevOps engineer has created an AWS Lambda function that checks for security group ID from input, removes rules that grant unrestricted access, and sends notifications through Amazon Simple Notification Service (Amazon SNS).

What should the DevOps engineer do next to meet the requirements?

- A. Configure the Lambda function to be invoked by the SNS topic. Create an AWS CloudTrail subscription for the SNS topic. Configure a subscription filter for security group modification events.

- B. Create an Amazon EventBridge scheduled rule to invoke the Lambda function. Define a schedule pattern that runs the Lambda function every hour.

- C. Create an Amazon EventBridge event rule that has the default event bus as the source. Define the rule’s event pattern to match EC2 security group creation and modification events. Configure the rule to invoke the Lambda function.

- D. Create an Amazon EventBridge custom event bus that subscribes to events from all AWS services. Configure the Lambda function to be invoked by the custom event bus.

Answer: C

Explanation:

To meet the requirements, the DevOps engineer should create an Amazon EventBridge event rule that has the default event bus as the source. The rule's event pattern should match EC2 security group creation and modification events, and it should be configured to invoke the Lambda function. This solution will allow for near real-time detection of security group rule changes and will trigger the Lambda function to remove any unrestricted rules and send email notifications to the security team. https://repost.aws/knowledge-center/monitor-security-group-changes-ec2
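A minimal sketch of the rule's event pattern and of the check the Lambda function might apply to each rule is shown below. The pattern matches EC2 API calls delivered through CloudTrail on the default event bus; the selection of event names is an assumption and may need more entries, and `is_unrestricted` is a hypothetical helper.

```python
import ipaddress

SECURITY_GROUP_EVENT_PATTERN = {
    "source": ["aws.ec2"],
    "detail-type": ["AWS API Call via CloudTrail"],
    "detail": {
        "eventSource": ["ec2.amazonaws.com"],
        "eventName": [
            "CreateSecurityGroup",
            "AuthorizeSecurityGroupIngress",
            "ModifySecurityGroupRules",
        ],
    },
}


def is_unrestricted(cidr):
    """True when the CIDR block covers every address (0.0.0.0/0 or ::/0)."""
    return ipaddress.ip_network(cidr, strict=False).prefixlen == 0
```

The function treats only a zero-length prefix as unrestricted; a stricter policy could also flag very wide ranges.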

NEW QUESTION 13

A development team wants to use AWS CloudFormation stacks to deploy an application. However, the developer IAM role does not have the required permissions to provision the resources that are specified in the AWS CloudFormation template. A DevOps engineer needs to implement a solution that allows the developers to deploy the stacks. The solution must follow the principle of least privilege.

Which solution will meet these requirements?

- A. Create an IAM policy that allows the developers to provision the required resources. Attach the policy to the developer IAM role.

- B. Create an IAM policy that allows full access to AWS CloudFormation. Attach the policy to the developer IAM role.

- C. Create an AWS CloudFormation service role that has the required permissions. Grant the developer IAM role a cloudformation:* action. Use the new service role during stack deployments.

- D. Create an AWS CloudFormation service role that has the required permissions. Grant the developer IAM role the iam:PassRole permission. Use the new service role during stack deployments.

Answer: D

Explanation:

https://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/using-iam-servicerole.html
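A minimal sketch of the iam:PassRole grant from option D, written as a Python function that returns the policy document. The function name, the statement ID, and the example ARN are hypothetical. Restricting the grant with the `iam:PassedToService` condition key is an extra tightening assumed here, not something the question requires.

```python
import json


def build_pass_role_policy(service_role_arn):
    """Return an IAM policy that lets a principal pass one role to CloudFormation."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "PassCloudFormationServiceRole",  # hypothetical statement ID
                "Effect": "Allow",
                "Action": "iam:PassRole",
                # Only the named service role may be passed.
                "Resource": service_role_arn,
                # Assumption: also limit which service can receive the role.
                "Condition": {
                    "StringEquals": {
                        "iam:PassedToService": "cloudformation.amazonaws.com"
                    }
                },
            }
        ],
    }


if __name__ == "__main__":
    # Hypothetical account ID and role name, for illustration only.
    arn = "arn:aws:iam::111122223333:role/cfn-deploy-service-role"
    print(json.dumps(build_pass_role_policy(arn), indent=2))
```

The developer role would still need permission to call the CloudFormation stack operations themselves. The resource permissions stay on the service role, which is what keeps the developer role close to least privilege.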

NEW QUESTION 14

A company wants to migrate its content sharing web application hosted on Amazon EC2 to a serverless architecture. The company currently deploys changes to its application by creating a new Auto Scaling group of EC2 instances and a new Elastic Load Balancer, and then shifting the traffic away using an Amazon Route 53 weighted routing policy.

For its new serverless application, the company is planning to use Amazon API Gateway and AWS Lambda. The company will need to update its deployment processes to work with the new application. It will also need to retain the ability to test new features on a small number of users before rolling the features out to the entire user base.

Which deployment strategy will meet these requirements?

- A. Use AWS CDK to deploy API Gateway and Lambda functions. When code needs to be changed, update the AWS CloudFormation stack and deploy the new version of the APIs and Lambda functions. Use a Route 53 failover routing policy for the canary release strategy.

- B. Use AWS CloudFormation to deploy API Gateway and Lambda functions using Lambda function versions. When code needs to be changed, update the CloudFormation stack with the new Lambda code and update the API versions using a canary release strategy. Promote the new version when testing is complete.

- C. Use AWS Elastic Beanstalk to deploy API Gateway and Lambda functions. When code needs to be changed, deploy a new version of the API and Lambda functions. Shift traffic gradually using an Elastic Beanstalk blue/green deployment.

- D. Use AWS OpsWorks to deploy API Gateway in the service layer and Lambda functions in a custom layer. When code needs to be changed, use OpsWorks to perform a blue/green deployment and shift traffic gradually.

Answer: B

Explanation:

https://docs.aws.amazon.com/serverless-application-model/latest/developerguide/automating-updates-to-serverless-apps.html

NEW QUESTION 15

A company is adopting AWS CodeDeploy to automate its application deployments for a Java-Apache Tomcat application with an Apache Webserver. The development team started with a proof of concept, created a deployment group for a developer environment, and performed functional tests within the application. After completion, the team will create additional deployment groups for staging and production.

The current log level is configured within the Apache settings, but the team wants to change this configuration dynamically when the deployment occurs, so that they can set different log level configurations depending on the deployment group without having a different application revision for each group.

How can these requirements be met with the LEAST management overhead and without requiring different script versions for each deployment group?

- A. Tag the Amazon EC2 instances depending on the deployment group. Then place a script into the application revision that calls the metadata service and the EC2 API to identify which deployment group the instance is part of. Use this information to configure the log level settings. Reference the script as part of the AfterInstall lifecycle hook in the appspec.yml file.

- B. Create a script that uses the CodeDeploy environment variable DEPLOYMENT_GROUP_NAME to identify which deployment group the instance is part of. Use this information to configure the log level settings. Reference this script as part of the BeforeInstall lifecycle hook in the appspec.yml file.

- C. Create a CodeDeploy custom environment variable for each environment. Then place a script into the application revision that checks this environment variable to identify which deployment group the instance is part of. Use this information to configure the log level settings. Reference this script as part of the ValidateService lifecycle hook in the appspec.yml file.

- D. Create a script that uses the CodeDeploy environment variable DEPLOYMENT_GROUP_ID to identify which deployment group the instance is part of to configure the log level settings. Reference this script as part of the Install lifecycle hook in the appspec.yml file.

Answer: B

Explanation:

The following are the steps that the company can take to change the log level dynamically when the deployment occurs:

✑ Create a script that uses the CodeDeploy environment variable DEPLOYMENT_GROUP_NAME to identify which deployment group the instance is part of.

✑ Use this information to configure the log level settings.

✑ Reference this script as part of the BeforeInstall lifecycle hook in the appspec.yml file.

The DEPLOYMENT_GROUP_NAME environment variable is automatically set by CodeDeploy when the deployment is triggered. This means that the script does not need to call the metadata service or the EC2 API to identify the deployment group.

This solution is the least complex and requires the least management overhead. It also does not require different script versions for each deployment group.
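The approach above can be sketched in Python as follows. The deployment group names, the log levels, and the function names are hypothetical. Only the DEPLOYMENT_GROUP_NAME variable and Apache's `LogLevel` directive come from CodeDeploy and Apache themselves.

```python
import os

# Hypothetical mapping from deployment group name to Apache log level.
LOG_LEVELS = {
    "dev": "debug",
    "staging": "info",
    "production": "warn",
}
DEFAULT_LOG_LEVEL = "warn"  # fallback for unknown or missing group names


def log_level_for(environ=os.environ):
    """Pick a log level from the deployment group name that CodeDeploy exports."""
    group = environ.get("DEPLOYMENT_GROUP_NAME", "")
    return LOG_LEVELS.get(group, DEFAULT_LOG_LEVEL)


def render_log_level_directive(environ=os.environ):
    """Return the Apache configuration line for the current deployment group."""
    return f"LogLevel {log_level_for(environ)}\n"


if __name__ == "__main__":
    # A hook script would write this line into an Apache config include file.
    print(render_log_level_directive(), end="")
```

Because the same script reads the group name at deployment time, one application revision can serve every deployment group.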

The following are the reasons why the other options are not correct:

✑ Option A is incorrect because it would require tagging the Amazon EC2 instances, which would be a manual and time-consuming process.

✑ Option C is incorrect because it would require creating a custom environment variable for each environment. This would be a complex and error-prone process.

✑ Option D is incorrect because it references the script from the Install lifecycle event, which is reserved for the CodeDeploy agent and cannot run scripts from the appspec.yml file. CodeDeploy does set the DEPLOYMENT_GROUP_ID environment variable, but the ID is harder to map to an environment than the deployment group name.

NEW QUESTION 16

A company has multiple accounts in an organization in AWS Organizations. The company's SecOps team needs to receive an Amazon Simple Notification Service (Amazon SNS) notification if any account in the organization turns off the Block Public Access feature on an Amazon S3 bucket. A DevOps engineer must implement this change without affecting the operation of any AWS accounts. The implementation must ensure that individual member accounts in the organization cannot turn off the notification.

Which solution will meet these requirements?

- A. Designate an account to be the delegated Amazon GuardDuty administrator account. Turn on GuardDuty for all accounts across the organization. In the GuardDuty administrator account, create an SNS topic. Subscribe the SecOps team's email address to the SNS topic. In the same account, create an Amazon EventBridge rule that uses an event pattern for GuardDuty findings and a target of the SNS topic.

- B. Create an AWS CloudFormation template that creates an SNS topic and subscribes the SecOps team’s email address to the SNS topic. In the template, include an Amazon EventBridge rule that uses an event pattern of CloudTrail activity for s3:PutBucketPublicAccessBlock and a target of the SNS topic. Deploy the stack to every account in the organization by using CloudFormation StackSets.

- C. Turn on AWS Config across the organization. In the delegated administrator account, create an SNS topic. Subscribe the SecOps team's email address to the SNS topic. Deploy a conformance pack that uses the s3-bucket-level-public-access-prohibited AWS Config managed rule in each account and uses an AWS Systems Manager document to publish an event to the SNS topic to notify the SecOps team.

- D. Turn on Amazon Inspector across the organization. In the Amazon Inspector delegated administrator account, create an SNS topic. Subscribe the SecOps team’s email address to the SNS topic. In the same account, create an Amazon EventBridge rule that uses an event pattern for public network exposure of the S3 bucket and publishes an event to the SNS topic to notify the SecOps team.

Answer: C

Explanation:

Amazon GuardDuty is primarily focused on threat detection and response, not configuration monitoring. A conformance pack is a collection of AWS Config rules and remediation actions that can be easily deployed as a single entity in an account and a Region or across an organization in AWS Organizations. https://docs.aws.amazon.com/config/latest/developerguide/conformance-packs.html https://docs.aws.amazon.com/config/latest/developerguide/s3-account-level-public-access-blocks.html

NEW QUESTION 17

A company runs an application with an Amazon EC2 and on-premises configuration. A DevOps engineer needs to standardize patching across both environments. Company policy dictates that patching only happens during non-business hours.

Which combination of actions will meet these requirements? (Choose three.)

- A. Add the physical machines into AWS Systems Manager using Systems Manager Hybrid Activations.

- B. Attach an IAM role to the EC2 instances, allowing them to be managed by AWS Systems Manager.

- C. Create IAM access keys for the on-premises machines to interact with AWS Systems Manager.

- D. Run an AWS Systems Manager Automation document to patch the systems every hour.

- E. Use Amazon EventBridge scheduled events to schedule a patch window.

- F. Use AWS Systems Manager Maintenance Windows to schedule a patch window.

Answer: ABF

Explanation:

https://docs.aws.amazon.com/systems-manager/latest/userguide/sysman-managed-instance-activation.html

NEW QUESTION 18

A company hosts a security auditing application in an AWS account. The auditing application uses an IAM role to access other AWS accounts. All the accounts are in the same organization in AWS Organizations.

A recent security audit revealed that users in the audited AWS accounts could modify or delete the auditing application's IAM role. The company needs to prevent any modification to the auditing application's IAM role by any entity other than a trusted administrator IAM role.

Which solution will meet these requirements?

- A. Create an SCP that includes a Deny statement for changes to the auditing application's IAM role. Include a condition that allows the trusted administrator IAM role to make changes. Attach the SCP to the root of the organization.

- B. Create an SCP that includes an Allow statement for changes to the auditing application's IAM role by the trusted administrator IAM role. Include a Deny statement for changes by all other IAM principals. Attach the SCP to the IAM service in each AWS account where the auditing application has an IAM role.

- C. Create an IAM permissions boundary that includes a Deny statement for changes to the auditing application's IAM role. Include a condition that allows the trusted administrator IAM role to make changes. Attach the permissions boundary to the audited AWS accounts.

- D. Create an IAM permissions boundary that includes a Deny statement for changes to the auditing application’s IAM role. Include a condition that allows the trusted administrator IAM role to make changes. Attach the permissions boundary to the auditing application's IAM role in the AWS accounts.

Answer: A

Explanation:

https://docs.aws.amazon.com/organizations/latest/userguide/orgs_manage_policies_scps.html?icmpid=docs_orgs_console

SCPs (service control policies) are the best way to restrict permissions at the organizational level. In this case, an SCP restricts modifications to the IAM role used by the auditing application while still allowing the trusted administrator role to make changes to it. Options C and D are not as effective because IAM permissions boundaries are applied to IAM entities (users and roles), not to the account itself, and would have to be attached to every IAM entity in each account.
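As a sketch of the SCP described in option A, the Python function below returns a policy document that denies changes to one role unless the caller is the trusted administrator role. The function name, the role names, and the exact list of denied IAM actions are assumptions chosen for illustration.

```python
import json

# IAM actions that modify or delete a role. This selection is an assumption;
# extend or trim it to match the changes that must be blocked.
ROLE_CHANGE_ACTIONS = [
    "iam:AttachRolePolicy",
    "iam:DeleteRole",
    "iam:DeleteRolePermissionsBoundary",
    "iam:DeleteRolePolicy",
    "iam:DetachRolePolicy",
    "iam:PutRolePermissionsBoundary",
    "iam:PutRolePolicy",
    "iam:UpdateAssumeRolePolicy",
    "iam:UpdateRole",
    "iam:UpdateRoleDescription",
]


def build_protect_role_scp(protected_role_name, admin_role_name):
    """Return an SCP that denies role changes to everyone except the admin role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyChangesToAuditRole",  # hypothetical statement ID
                "Effect": "Deny",
                "Action": ROLE_CHANGE_ACTIONS,
                "Resource": [f"arn:aws:iam::*:role/{protected_role_name}"],
                # The Deny does not apply when the caller is the admin role.
                "Condition": {
                    "ArnNotLike": {
                        "aws:PrincipalARN": f"arn:aws:iam::*:role/{admin_role_name}"
                    }
                },
            }
        ],
    }


if __name__ == "__main__":
    # Hypothetical role names, for illustration only.
    print(json.dumps(build_protect_role_scp("audit-app-role", "trusted-admin-role"), indent=2))
```

Attaching a policy like this at the organization root applies the Deny to the member accounts beneath it, so individual accounts cannot opt out.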

NEW QUESTION 19

A company has developed a serverless web application that is hosted on AWS. The application consists of Amazon S3, Amazon API Gateway, several AWS Lambda functions, and an Amazon RDS for MySQL database. The company is using AWS CodeCommit to store the source code. The source code is a combination of AWS Serverless Application Model (AWS SAM) templates and Python code.

A security audit and penetration test reveal that user names and passwords for authentication to the database are hardcoded within CodeCommit repositories. A DevOps engineer must implement a solution to automatically detect and prevent hardcoded secrets.

What is the MOST secure solution that meets these requirements?

- A. Enable Amazon CodeGuru Profiler. Decorate the handler function with @with_lambda_profiler(). Manually review the recommendation report. Write the secret to AWS Systems Manager Parameter Store as a secure string. Update the SAM templates and the Python code to pull the secret from Parameter Store.

- B. Associate the CodeCommit repository with Amazon CodeGuru Reviewer. Manually check the code review for any recommendations. Choose the option to protect the secret. Update the SAM templates and the Python code to pull the secret from AWS Secrets Manager.

- C. Enable Amazon CodeGuru Profiler. Decorate the handler function with @with_lambda_profiler(). Manually review the recommendation report. Choose the option to protect the secret. Update the SAM templates and the Python code to pull the secret from AWS Secrets Manager.

- D. Associate the CodeCommit repository with Amazon CodeGuru Reviewer. Manually check the code review for any recommendations. Write the secret to AWS Systems Manager Parameter Store as a string. Update the SAM templates and the Python code to pull the secret from Parameter Store.

Answer: B

Explanation:

https://docs.aws.amazon.com/codecommit/latest/userguide/how-to-amazon-codeguru-reviewer.html

NEW QUESTION 20

The security team depends on AWS CloudTrail to detect sensitive security issues in the company's AWS account. The DevOps engineer needs a solution to auto-remediate CloudTrail being turned off in an AWS account.

What solution ensures the LEAST amount of downtime for the CloudTrail log deliveries?

- A. Create an Amazon EventBridge rule for the CloudTrail StopLogging event. Create an AWS Lambda function that uses the AWS SDK to call StartLogging on the ARN of the resource in which StopLogging was called. Add the Lambda function ARN as a target to the EventBridge rule.

- B. Deploy the AWS-managed CloudTrail-enabled AWS Config rule, set with a periodic interval of 1 hour. Create an Amazon EventBridge rule for AWS Config rules compliance change. Create an AWS Lambda function that uses the AWS SDK to call StartLogging on the ARN of the resource in which StopLogging was called. Add the Lambda function ARN as a target to the EventBridge rule.

- C. Create an Amazon EventBridge rule for a scheduled event every 5 minutes. Create an AWS Lambda function that uses the AWS SDK to call StartLogging on a CloudTrail trail in the AWS account. Add the Lambda function ARN as a target to the EventBridge rule.

- D. Launch a t2.nano instance with a script running every 5 minutes that uses the AWS SDK to query CloudTrail in the current account. If the CloudTrail trail is disabled, have the script re-enable the trail.

Answer: A

Explanation:

https://aws.amazon.com/blogs/mt/monitor-changes-and-auto-enable-logging-in-aws-cloudtrail/
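A minimal sketch of the Lambda function from option A, in Python. It assumes the EventBridge rule forwards the CloudTrail record for StopLogging and that the trail identifier is found under `detail.requestParameters.name`. The optional `cloudtrail` argument is a hypothetical addition so the handler can be exercised without AWS credentials.

```python
def lambda_handler(event, context, cloudtrail=None):
    """Restart logging on the trail named in a StopLogging event."""
    if cloudtrail is None:
        # Imported lazily so the function can be tested without boto3 installed.
        import boto3
        cloudtrail = boto3.client("cloudtrail")

    detail = event.get("detail") or {}
    if detail.get("eventName") != "StopLogging":
        # Ignore anything the rule was not meant to deliver.
        return {"restarted": None}

    # Assumption: the trail name or ARN is carried in requestParameters.name.
    trail = (detail.get("requestParameters") or {}).get("name")
    if not trail:
        return {"restarted": None}

    cloudtrail.start_logging(Name=trail)
    return {"restarted": trail}
```

Because the rule reacts to the StopLogging event itself, the gap in log delivery is limited to the time it takes the event to reach the function, rather than a polling interval.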
