AWS-Certified-Machine-Learning-Specialty Exam Questions - Online Test

AWS-Certified-Machine-Learning-Specialty Premium VCE File

150 Lectures, 20 Hours

It is impossible to pass Amazon AWS-Certified-Machine-Learning-Specialty exam without any help in the short term. Come to Actualtests soon and find the most advanced, correct and guaranteed Amazon AWS-Certified-Machine-Learning-Specialty practice questions. You will get a surprising result by our Leading AWS Certified Machine Learning - Specialty practice guides.

Amazon AWS-Certified-Machine-Learning-Specialty Free Dumps Questions Online, Read and Test Now.

NEW QUESTION 1

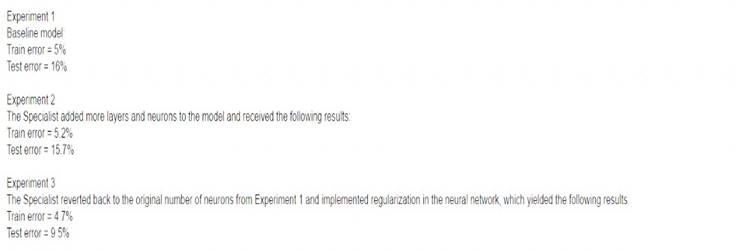

An Machine Learning Specialist discover the following statistics while experimenting on a model.

What can the Specialist from the experiments?

- A. The model In Experiment 1 had a high variance error lhat was reduced in Experiment 3 by regularization Experiment 2 shows that there is minimal bias error in Experiment 1

- B. The model in Experiment 1 had a high bias error that was reduced in Experiment 3 by regularization Experiment 2 shows that there is minimal variance error in Experiment 1

- C. The model in Experiment 1 had a high bias error and a high variance error that were reduced in Experiment 3 by regularization Experiment 2 shows thai high bias cannot be reduced by increasing layers and neurons in the model

- D. The model in Experiment 1 had a high random noise error that was reduced in Expenment 3 by regularization Expenment 2 shows that random noise cannot be reduced by increasing layers and neurons in the model

Answer: C

NEW QUESTION 2

A data scientist needs to identify fraudulent user accounts for a company's ecommerce platform. The company wants the ability to determine if a newly created account is associated with a previously known fraudulent user. The data scientist is using AWS Glue to cleanse the company's application logs during ingestion.

Which strategy will allow the data scientist to identify fraudulent accounts?

- A. Execute the built-in FindDuplicates Amazon Athena query.

- B. Create a FindMatches machine learning transform in AWS Glue.

- C. Create an AWS Glue crawler to infer duplicate accounts in the source data.

- D. Search for duplicate accounts in the AWS Glue Data Catalog.

Answer: B

NEW QUESTION 3

A Machine Learning Specialist is training a model to identify the make and model of vehicles in images The Specialist wants to use transfer learning and an existing model trained on images of general objects The Specialist collated a large custom dataset of pictures containing different vehicle makes and models

- A. Initialize the model with random weights in all layers including the last fully connected layer

- B. Initialize the model with pre-trained weights in all layers and replace the last fully connected layer.

- C. Initialize the model with random weights in all layers and replace the last fully connected layer

- D. Initialize the model with pre-trained weights in all layers including the last fully connected layer

Answer: D

NEW QUESTION 4

A data scientist is developing a pipeline to ingest streaming web traffic data. The data scientist needs to implement a process to identify unusual web traffic patterns as part of the pipeline. The patterns will be used downstream for alerting and incident response. The data scientist has access to unlabeled historic data to use, if needed.

The solution needs to do the following: Calculate an anomaly score for each web traffic entry.

Calculate an anomaly score for each web traffic entry. Adapt unusual event identification to changing web patterns over time. Which approach should the data scientist implement to meet these requirements?

Adapt unusual event identification to changing web patterns over time. Which approach should the data scientist implement to meet these requirements?

- A. Use historic web traffic data to train an anomaly detection model using the Amazon SageMaker Random Cut Forest (RCF) built-in mode

- B. Use an Amazon Kinesis Data Stream to process the incoming webtrafficdat

- C. Attach a preprocessing AWS Lambda function to perform data enrichment by calling the RCF modelto calculate the anomaly score for each record.

- D. Use historic web traffic data to train an anomaly detection model using the Amazon SageMaker built-inXGBoost mode

- E. Use an Amazon Kinesis Data Stream to process the incoming web traffic dat

- F. Attach apreprocessing AWS Lambda function to perform data enrichment by calling the XGBoost model to calculate the anomaly score for each record.

- G. Collect the streaming data using Amazon Kinesis Data Firehos

- H. Map the delivery stream as an inputsource for Amazon Kinesis Data Analytic

- I. Write a SQL query to run in real time against the streaming datawith the k-Nearest Neighbors (kNN) SQL extension to calculate anomaly scores for each record using a tumbling window.

- J. Collect the streaming data using Amazon Kinesis Data Firehos

- K. Map the delivery stream as an inputsource for Amazon Kinesis Data Analytic

- L. Write a SQL query to run in real time against the streaming datawith the Amazon Random Cut Forest (RCF) SQL extension to calculate anomaly scores for each record using a sliding window.

Answer: D

NEW QUESTION 5

A Data Scientist is developing a binary classifier to predict whether a patient has a particular disease on a series of test results. The Data Scientist has data on 400 patients randomly selected from the population. The disease is seen in 3% of the population.

Which cross-validation strategy should the Data Scientist adopt?

- A. A k-fold cross-validation strategy with k=5

- B. A stratified k-fold cross-validation strategy with k=5

- C. A k-fold cross-validation strategy with k=5 and 3 repeats

- D. An 80/20 stratified split between training and validation

Answer: B

NEW QUESTION 6

A data scientist has a dataset of machine part images stored in Amazon Elastic File System (Amazon EFS). The data scientist needs to use Amazon SageMaker to create and train an image classification machine learning model based on this dataset. Because of budget and time constraints, management wants the data scientist to create and train a model with the least number of steps and integration work required.

How should the data scientist meet these requirements?

- A. Mount the EFS file system to a SageMaker notebook and run a script that copies the data to an Amazon FSx for Lustre file syste

- B. Run the SageMaker training job with the FSx for Lustre file system as the data source.

- C. Launch a transient Amazon EMR cluste

- D. Configure steps to mount the EFS file system and copy the data to an Amazon S3 bucket by using S3DistC

- E. Run the SageMaker training job with Amazon S3 as the data source.

- F. Mount the EFS file system to an Amazon EC2 instance and use the AWS CLI to copy the data to an Amazon S3 bucke

- G. Run the SageMaker training job with Amazon S3 as the data source.

- H. Run a SageMaker training job with an EFS file system as the data source.

Answer: A

NEW QUESTION 7

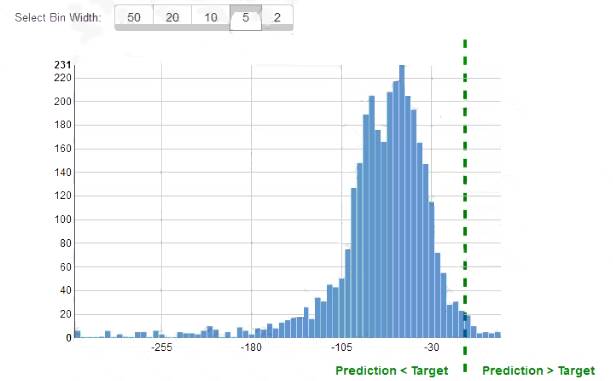

While reviewing the histogram for residuals on regression evaluation data a Machine Learning Specialist notices that the residuals do not form a zero-centered bell shape as shown What does this mean?

- A. The model might have prediction errors over a range of target values.

- B. The dataset cannot be accurately represented using the regression model

- C. There are too many variables in the model

- D. The model is predicting its target values perfectly.

Answer: D

NEW QUESTION 8

A large company has developed a B1 application that generates reports and dashboards using data collected from various operational metrics The company wants to provide executives with an enhanced experience so they can use natural language to get data from the reports The company wants the executives to be able ask questions using written and spoken interlaces

Which combination of services can be used to build this conversational interface? (Select THREE )

- A. Alexa for Business

- B. Amazon Connect

- C. Amazon Lex

- D. Amazon Poly

- E. Amazon Comprehend

- F. Amazon Transcribe

Answer: BEF

NEW QUESTION 9

A company will use Amazon SageMaker to train and host a machine learning (ML) model for a marketing campaign. The majority of data is sensitive customer data. The data must be encrypted at rest. The company wants AWS to maintain the root of trust for the master keys and wants encryption key usage to be logged.

Which implementation will meet these requirements?

- A. Use encryption keys that are stored in AWS Cloud HSM to encrypt the ML data volumes, and to encryptthe model artifacts and data in Amazon S3.

- B. Use SageMaker built-in transient keys to encrypt the ML data volume

- C. Enable default encryption for new Amazon Elastic Block Store (Amazon EBS) volumes.

- D. Use customer managed keys in AWS Key Management Service (AWS KMS) to encrypt the ML data volumes, and to encrypt the model artifacts and data in Amazon S3.

- E. Use AWS Security Token Service (AWS STS) to create temporary tokens to encrypt the ML storage volumes, and to encrypt the model artifacts and data in Amazon S3.

Answer: C

NEW QUESTION 10

A bank wants to launch a low-rate credit promotion. The bank is located in a town that recently experienced economic hardship. Only some of the bank's customers were affected by the crisis, so the bank's credit team must identify which customers to target with the promotion. However, the credit team wants to make sure that loyal customers' full credit history is considered when the decision is made.

The bank's data science team developed a model that classifies account transactions and understands credit eligibility. The data science team used the XGBoost algorithm to train the model. The team used 7 years of bank transaction historical data for training and hyperparameter tuning over the course of several days.

The accuracy of the model is sufficient, but the credit team is struggling to explain accurately why the model denies credit to some customers. The credit team has almost no skill in data science.

What should the data science team do to address this issue in the MOST operationally efficient manner?

- A. Use Amazon SageMaker Studio to rebuild the mode

- B. Create a notebook that uses the XGBoost training container to perform model trainin

- C. Deploy the model at an endpoin

- D. Enable Amazon SageMaker Model Monitor to store inference

- E. Use the inferences to create Shapley values that help explain model behavio

- F. Create a chart that shows features and SHapley Additive explanation (SHAP) values to explain to the credit team how the features affect the model outcomes.

- G. Use Amazon SageMaker Studio to rebuild the mode

- H. Create a notebook that uses the XGBoost training container to perform model trainin

- I. Activate Amazon SageMaker Debugger, and configure it to calculate and collect Shapley value

- J. Create a chart that shows features and SHapley Additive explanation (SHAP) values to explain to the credit team how the features affect the model outcomes.

- K. Create an Amazon SageMaker notebook instanc

- L. Use the notebook instance and the XGBoost library to locally retrain the mode

- M. Use the plot_importance() method in the Python XGBoost interface to create a feature importance char

- N. Use that chart to explain to the credit team how the features affect the model outcomes.

- O. Use Amazon SageMaker Studio to rebuild the mode

- P. Create a notebook that uses the XGBoost training container to perform model trainin

- Q. Deploy the model at an endpoin

- R. Use Amazon SageMakerProcessing to post-analyze the model and create a feature importance explainability chart automatically for the credit team.

Answer: C

NEW QUESTION 11

A machine learning (ML) specialist wants to create a data preparation job that uses a PySpark script with complex window aggregation operations to create data for training and testing. The ML specialist needs to evaluate the impact of the number of features and the sample count on model performance.

Which approach should the ML specialist use to determine the ideal data transformations for the model?

- A. Add an Amazon SageMaker Debugger hook to the script to capture key metric

- B. Run the script as an AWS Glue job.

- C. Add an Amazon SageMaker Experiments tracker to the script to capture key metric

- D. Run the script as an AWS Glue job.

- E. Add an Amazon SageMaker Debugger hook to the script to capture key parameter

- F. Run the script as a SageMaker processing job.

- G. Add an Amazon SageMaker Experiments tracker to the script to capture key parameter

- H. Run the script as a SageMaker processing job.

Answer: B

NEW QUESTION 12

A Data Scientist needs to create a serverless ingestion and analytics solution for high-velocity, real-time streaming data.

The ingestion process must buffer and convert incoming records from JSON to a query-optimized, columnar format without data loss. The output datastore must be highly available, and Analysts must be able to run SQL queries against the data and connect to existing business intelligence dashboards.

Which solution should the Data Scientist build to satisfy the requirements?

- A. Create a schema in the AWS Glue Data Catalog of the incoming data forma

- B. Use an Amazon Kinesis Data Firehose delivery stream to stream the data and transform the data to Apache Parquet or ORC format using the AWS Glue Data Catalog before delivering to Amazon S3. Have the Analysts query the data directly from Amazon S3 using Amazon Athena, and connect to Bl tools using the Athena Java Database Connectivity (JDBC) connector.

- C. Write each JSON record to a staging location in Amazon S3. Use the S3 Put event to trigger an AWS Lambda function that transforms the data into Apache Parquet or ORC format and writes the data to a processed data location in Amazon S3. Have the Analysts query the data directly from Amazon S3 using Amazon Athena, and connect to Bl tools using the Athena Java Database Connectivity (JDBC) connector.

- D. Write each JSON record to a staging location in Amazon S3. Use the S3 Put event to trigger an AWS Lambda function that transforms the data into Apache Parquet or ORC format and inserts it into an Amazon RDS PostgreSQL databas

- E. Have the Analysts query and run dashboards from the RDS database.

- F. Use Amazon Kinesis Data Analytics to ingest the streaming data and perform real-time SQL queries to convert the records to Apache Parquet before delivering to Amazon S3. Have the Analysts query the data directly from Amazon S3 using Amazon Athena and connect to Bl tools using the Athena Java Database Connectivity (JDBC) connector.

Answer: A

NEW QUESTION 13

An interactive online dictionary wants to add a widget that displays words used in similar contexts. A Machine Learning Specialist is asked to provide word features for the downstream nearest neighbor model powering the widget.

What should the Specialist do to meet these requirements?

- A. Create one-hot word encoding vectors.

- B. Produce a set of synonyms for every word using Amazon Mechanical Turk.

- C. Create word embedding factors that store edit distance with every other word.

- D. Download word embedding’s pre-trained on a large corpus.

Answer: D

NEW QUESTION 14

A retail company is using Amazon Personalize to provide personalized product recommendations for its customers during a marketing campaign. The company sees a significant increase in sales of recommended items to existing customers immediately after deploying a new solution version, but these sales decrease a short time after deployment. Only historical data from before the marketing campaign is available for training.

How should a data scientist adjust the solution?

- A. Use the event tracker in Amazon Personalize to include real-time user interactions.

- B. Add user metadata and use the HRNN-Metadata recipe in Amazon Personalize.

- C. Implement a new solution using the built-in factorization machines (FM) algorithm in Amazon SageMaker.

- D. Add event type and event value fields to the interactions dataset in Amazon Personalize.

Answer: A

NEW QUESTION 15

A city wants to monitor its air quality to address the consequences of air pollution A Machine Learning Specialist needs to forecast the air quality in parts per million of contaminates for the next 2 days in the city As this is a prototype, only daily data from the last year is available

Which model is MOST likely to provide the best results in Amazon SageMaker?

- A. Use the Amazon SageMaker k-Nearest-Neighbors (kNN) algorithm on the single time series consisting of the full year of data with a predictor_type of regressor.

- B. Use Amazon SageMaker Random Cut Forest (RCF) on the single time series consisting of the full year of data.

- C. Use the Amazon SageMaker Linear Learner algorithm on the single time series consisting of the full yearof data with a predictor_type of regressor.

- D. Use the Amazon SageMaker Linear Learner algorithm on the single time series consisting of the full yearof data with a predictor_type of classifier.

Answer: C

NEW QUESTION 16

A retail company wants to combine its customer orders with the product description data from its product catalog. The structure and format of the records in each dataset is different. A data analyst tried to use a spreadsheet to combine the datasets, but the effort resulted in duplicate records and records that were not properly combined. The company needs a solution that it can use to combine similar records from the two datasets and remove any duplicates.

Which solution will meet these requirements?

- A. Use an AWS Lambda function to process the dat

- B. Use two arrays to compare equal strings in the fields from the two datasets and remove any duplicates.

- C. Create AWS Glue crawlers for reading and populating the AWS Glue Data Catalo

- D. Call the AWS Glue SearchTables API operation to perform a fuzzy-matching search on the two datasets, and cleanse the data accordingly.

- E. Create AWS Glue crawlers for reading and populating the AWS Glue Data Catalo

- F. Use the FindMatches transform to cleanse the data.

- G. Create an AWS Lake Formation custom transfor

- H. Run a transformation for matching products from the Lake Formation console to cleanse the data automatically.

Answer: D

NEW QUESTION 17

......

Thanks for reading the newest AWS-Certified-Machine-Learning-Specialty exam dumps! We recommend you to try the PREMIUM Thedumpscentre.com AWS-Certified-Machine-Learning-Specialty dumps in VCE and PDF here: https://www.thedumpscentre.com/AWS-Certified-Machine-Learning-Specialty-dumps/ (307 Q&As Dumps)

- [2021-New] Amazon AWS-SysOps Dumps With Update Exam Questions (121-130)

- [2021-New] Amazon AWS-SysOps Dumps With Update Exam Questions (91-100)

- [2021-New] Amazon AWS-Certified-DevOps-Engineer-Professional Dumps With Update Exam Questions (31-40)

- [2021-New] Amazon AWS-SysOps Dumps With Update Exam Questions (141-150)

- [2021-New] Amazon AWS-Certified-DevOps-Engineer-Professional Dumps With Update Exam Questions (1-10)

- [2021-New] Amazon AWS-Certified-Developer-Associate Dumps With Update Exam Questions (71-80)

- [2021-New] Amazon AWS-Certified-Developer-Associate Dumps With Update Exam Questions (11-20)

- [2021-New] Amazon AWS-Certified-Solutions-Architect-Professional Dumps With Update Exam Questions (1-10)

- [2021-New] Amazon AWS-Solution-Architect-Associate Dumps With Update Exam Questions (91-100)

- [2021-New] Amazon AWS-Solution-Architect-Associate Dumps With Update Exam Questions (191-200)