AWS-Certified-Machine-Learning-Specialty Exam Questions - Online Test

AWS-Certified-Machine-Learning-Specialty Premium VCE File

150 Lectures, 20 Hours

Want to know Certleader AWS-Certified-Machine-Learning-Specialty Exam practice test features? Want to lear more about Amazon AWS Certified Machine Learning - Specialty certification experience? Study Printable Amazon AWS-Certified-Machine-Learning-Specialty answers to Regenerate AWS-Certified-Machine-Learning-Specialty questions at Certleader. Gat a success with an absolute guarantee to pass Amazon AWS-Certified-Machine-Learning-Specialty (AWS Certified Machine Learning - Specialty) test on your first attempt.

Free demo questions for Amazon AWS-Certified-Machine-Learning-Specialty Exam Dumps Below:

NEW QUESTION 1

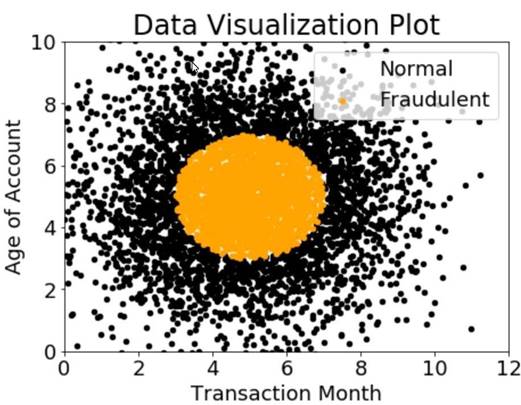

A company wants to classify user behavior as either fraudulent or normal. Based on internal research, a Machine Learning Specialist would like to build a binary classifier based on two features: age of account and transaction month. The class distribution for these features is illustrated in the figure provided.

Based on this information which model would have the HIGHEST accuracy?

- A. Long short-term memory (LSTM) model with scaled exponential linear unit (SELL))

- B. Logistic regression

- C. Support vector machine (SVM) with non-linear kernel

- D. Single perceptron with tanh activation function

Answer: C

NEW QUESTION 2

A company is building a new version of a recommendation engine. Machine learning (ML) specialists need to keep adding new data from users to improve personalized recommendations. The ML specialists gather data from the users’ interactions on the platform and from sources such as external websites and social media.

The pipeline cleans, transforms, enriches, and compresses terabytes of data daily, and this data is stored in Amazon S3. A set of Python scripts was coded to do the job and is stored in a large Amazon EC2 instance. The whole process takes more than 20 hours to finish, with each script taking at least an hour. The company wants to move the scripts out of Amazon EC2 into a more managed solution that will eliminate the need to maintain servers.

Which approach will address all of these requirements with the LEAST development effort?

- A. Load the data into an Amazon Redshift cluste

- B. Execute the pipeline by using SQ

- C. Store the results in Amazon S3.

- D. Load the data into Amazon DynamoD

- E. Convert the scripts to an AWS Lambda functio

- F. Execute the pipeline by triggering Lambda execution

- G. Store the results in Amazon S3.

- H. Create an AWS Glue jo

- I. Convert the scripts to PySpar

- J. Execute the pipelin

- K. Store the results in Amazon S3.

- L. Create a set of individual AWS Lambda functions to execute each of the script

- M. Build a step function by using the AWS Step Functions Data Science SD

- N. Store the results in Amazon S3.

Answer: B

NEW QUESTION 3

An online reseller has a large, multi-column dataset with one column missing 30% of its data A Machine Learning Specialist believes that certain columns in the dataset could be used to reconstruct the missing data.

Which reconstruction approach should the Specialist use to preserve the integrity of the dataset?

- A. Listwise deletion

- B. Last observation carried forward

- C. Multiple imputation

- D. Mean substitution

Answer: C

NEW QUESTION 4

A company wants to use automatic speech recognition (ASR) to transcribe messages that are less than 60 seconds long from a voicemail-style application. The company requires the correct identification of 200 unique product names, some of which have unique spellings or pronunciations.

The company has 4,000 words of Amazon SageMaker Ground Truth voicemail transcripts it can use to customize the chosen ASR model. The company needs to ensure that everyone can update their customizations multiple times each hour.

Which approach will maximize transcription accuracy during the development phase?

- A. Use a voice-driven Amazon Lex bot to perform the ASR customizatio

- B. Create customer slots within the bot that specifically identify each of the required product name

- C. Use the Amazon Lex synonym mechanism to provide additional variations of each product name as mis-transcriptions are identified in development.

- D. Use Amazon Transcribe to perform the ASR customizatio

- E. Analyze the word confidence scores in the transcript, and automatically create or update a custom vocabulary file with any word that has a confidence score below an acceptable threshold valu

- F. Use this updated custom vocabulary file in all future transcription tasks.

- G. Create a custom vocabulary file containing each product name with phonetic pronunciations, and use it with Amazon Transcribe to perform the ASR customizatio

- H. Analyze the transcripts and manually update the custom vocabulary file to include updated or additional entries for those names that are not being correctly identified.

- I. Use the audio transcripts to create a training dataset and build an Amazon Transcribe custom language mode

- J. Analyze the transcripts and update the training dataset with a manually corrected version of transcripts where product names are not being transcribed correctl

- K. Create an updated custom language model.

Answer: A

NEW QUESTION 5

An e-commerce company needs a customized training model to classify images of its shirts and pants products The company needs a proof of concept in 2 to 3 days with good accuracy Which compute choice should the Machine Learning Specialist select to train and achieve good accuracy on the model quickly?

- A. m5 4xlarge (general purpose)

- B. r5.2xlarge (memory optimized)

- C. p3.2xlarge (GPU accelerated computing)

- D. p3 8xlarge (GPU accelerated computing)

Answer: C

NEW QUESTION 6

A Data Scientist is developing a machine learning model to predict future patient outcomes based on information collected about each patient and their treatment plans. The model should output a continuous value as its prediction. The data available includes labeled outcomes for a set of 4,000 patients. The study was conducted on a group of individuals over the age of 65 who have a particular disease that is known to worsen with age.

Initial models have performed poorly. While reviewing the underlying data, the Data Scientist notices that, out of 4,000 patient observations, there are 450 where the patient age has been input as 0. The other features for these observations appear normal compared to the rest of the sample population.

How should the Data Scientist correct this issue?

- A. Drop all records from the dataset where age has been set to 0.

- B. Replace the age field value for records with a value of 0 with the mean or median value from the dataset.

- C. Drop the age feature from the dataset and train the model using the rest of the features.

- D. Use k-means clustering to handle missing features.

Answer: A

NEW QUESTION 7

A manufacturing company uses machine learning (ML) models to detect quality issues. The models use images that are taken of the company's product at the end of each production step. The company has thousands of machines at the production site that generate one image per second on average.

The company ran a successful pilot with a single manufacturing machine. For the pilot, ML specialists used an industrial PC that ran AWS IoT Greengrass with a long-running AWS Lambda function that uploaded the images to Amazon S3. The uploaded images invoked a Lambda function that was written in Python to perform inference by using an Amazon SageMaker endpoint that ran a custom model. The inference results were forwarded back to a web service that was hosted at the production site to prevent faulty products from being shipped.

The company scaled the solution out to all manufacturing machines by installing similarly configured industrial PCs on each production machine. However, latency for predictions increased beyond acceptable limits. Analysis shows that the internet connection is at its capacity limit.

How can the company resolve this issue MOST cost-effectively?

- A. Set up a 10 Gbps AWS Direct Connect connection between the production site and the nearest AWS Regio

- B. Use the Direct Connect connection to upload the image

- C. Increase the size of the instances and the number of instances that are used by the SageMaker endpoint.

- D. Extend the long-running Lambda function that runs on AWS IoT Greengrass to compress the images and upload the compressed files to Amazon S3. Decompress the files by using a separate Lambda function that invokes the existing Lambda function to run the inference pipeline.

- E. Use auto scaling for SageMake

- F. Set up an AWS Direct Connect connection between the production site and the nearest AWS Regio

- G. Use the Direct Connect connection to upload the images.

- H. Deploy the Lambda function and the ML models onto the AWS IoT Greengrass core that is running on the industrial PCs that are installed on each machin

- I. Extend the long-running Lambda function that runs on AWS IoT Greengrass to invoke the Lambda function with the captured images and run the inference on the edge component that forwards the results directly to the web service.

Answer: D

NEW QUESTION 8

A Machine Learning Specialist is implementing a full Bayesian network on a dataset that describes public transit in New York City. One of the random variables is discrete, and represents the number of minutes New Yorkers wait for a bus given that the buses cycle every 10 minutes, with a mean of 3 minutes.

Which prior probability distribution should the ML Specialist use for this variable?

- A. Poisson distribution ,

- B. Uniform distribution

- C. Normal distribution

- D. Binomial distribution

Answer: A

NEW QUESTION 9

A data scientist is using the Amazon SageMaker Neural Topic Model (NTM) algorithm to build a model that recommends tags from blog posts. The raw blog post data is stored in an Amazon S3 bucket in JSON format. During model evaluation, the data scientist discovered that the model recommends certain stopwords such as "a," "an,” and "the" as tags to certain blog posts, along with a few rare words that are present only in certain blog entries. After a few iterations of tag review with the content team, the data scientist notices that the rare words are unusual but feasible. The data scientist also must ensure that the tag recommendations of the generated model do not include the stopwords.

What should the data scientist do to meet these requirements?

- A. Use the Amazon Comprehend entity recognition API operation

- B. Remove the detected words from the blog post dat

- C. Replace the blog post data source in the S3 bucket.

- D. Run the SageMaker built-in principal component analysis (PCA) algorithm with the blog post data from the S3 bucket as the data sourc

- E. Replace the blog post data in the S3 bucket with the results of the training job.

- F. Use the SageMaker built-in Object Detection algorithm instead of the NTM algorithm for the training job to process the blog post data.

- G. Remove the stopwords from the blog post data by using the Count Vectorizer function in the scikit-learnlibrar

- H. Replace the blog post data in the S3 bucket with the results of the vectorizer.

Answer: D

NEW QUESTION 10

A Machine Learning Specialist is building a prediction model for a large number of features using linear models, such as linear regression and logistic regression During exploratory data analysis the Specialist observes that many features are highly correlated with each other This may make the model unstable

What should be done to reduce the impact of having such a large number of features?

- A. Perform one-hot encoding on highly correlated features

- B. Use matrix multiplication on highly correlated features.

- C. Create a new feature space using principal component analysis (PCA)

- D. Apply the Pearson correlation coefficient

Answer: B

NEW QUESTION 11

A Machine Learning Specialist trained a regression model, but the first iteration needs optimizing. The Specialist needs to understand whether the model is more frequently overestimating or underestimating the target.

What option can the Specialist use to determine whether it is overestimating or underestimating the target value?

- A. Root Mean Square Error (RMSE)

- B. Residual plots

- C. Area under the curve

- D. Confusion matrix

Answer: B

NEW QUESTION 12

A Machine Learning Specialist is configuring Amazon SageMaker so multiple Data Scientists can access notebooks, train models, and deploy endpoints. To ensure the best operational performance, the Specialist needs to be able to track how often the Scientists are deploying models, GPU and CPU utilization on the deployed SageMaker endpoints, and all errors that are generated when an endpoint is invoked.

Which services are integrated with Amazon SageMaker to track this information? (Select TWO.)

- A. AWS CloudTrail

- B. AWS Health

- C. AWS Trusted Advisor

- D. Amazon CloudWatch

- E. AWS Config

Answer: AD

NEW QUESTION 13

A financial services company is building a robust serverless data lake on Amazon S3. The data lake should be flexible and meet the following requirements:

* Support querying old and new data on Amazon S3 through Amazon Athena and Amazon Redshift Spectrum.

* Support event-driven ETL pipelines.

* Provide a quick and easy way to understand metadata. Which approach meets trfese requirements?

- A. Use an AWS Glue crawler to crawl S3 data, an AWS Lambda function to trigger an AWS Glue ETL job, and an AWS Glue Data catalog to search and discover metadata.

- B. Use an AWS Glue crawler to crawl S3 data, an AWS Lambda function to trigger an AWS Batch job, and an external Apache Hive metastore to search and discover metadata.

- C. Use an AWS Glue crawler to crawl S3 data, an Amazon CloudWatch alarm to trigger an AWS Batch job, and an AWS Glue Data Catalog to search and discover metadata.

- D. Use an AWS Glue crawler to crawl S3 data, an Amazon CloudWatch alarm to trigger an AWS Glue ETL job, and an external Apache Hive metastore to search and discover metadata.

Answer: A

NEW QUESTION 14

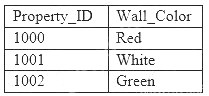

A machine learning specialist is developing a regression model to predict rental rates from rental listings. A variable named Wall_Color represents the most prominent exterior wall color of the property. The following is the sample data, excluding all other variables:

The specialist chose a model that needs numerical input data.

Which feature engineering approaches should the specialist use to allow the regression model to learn from the Wall_Color data? (Choose two.)

- A. Apply integer transformation and set Red = 1, White = 5, and Green = 10.

- B. Add new columns that store one-hot representation of colors.

- C. Replace the color name string by its length.

- D. Create three columns to encode the color in RGB format.

- E. Replace each color name by its training set frequency.

Answer: AD

NEW QUESTION 15

A Machine Learning Specialist uploads a dataset to an Amazon S3 bucket protected with server-side encryption using AWS KMS.

How should the ML Specialist define the Amazon SageMaker notebook instance so it can read the same dataset from Amazon S3?

- A. Define security group(s) to allow all HTTP inbound/outbound traffic and assign those security group(s) tothe Amazon SageMaker notebook instance.

- B. onfigure the Amazon SageMaker notebook instance to have access to the VP

- C. Grant permission in the KMS key policy to the notebook’s KMS role.

- D. Assign an IAM role to the Amazon SageMaker notebook with S3 read access to the datase

- E. Grant permission in the KMS key policy to that role.

- F. Assign the same KMS key used to encrypt data in Amazon S3 to the Amazon SageMaker notebook instance.

Answer: D

NEW QUESTION 16

A Data Engineer needs to build a model using a dataset containing customer credit card information. How can the Data Engineer ensure the data remains encrypted and the credit card information is secure?

- A. Use a custom encryption algorithm to encrypt the data and store the data on an Amazon SageMaker instance in a VP

- B. Use the SageMaker DeepAR algorithm to randomize the credit card numbers.

- C. Use an IAM policy to encrypt the data on the Amazon S3 bucket and Amazon Kinesis to automatically discard credit card numbers and insert fake credit card numbers.

- D. Use an Amazon SageMaker launch configuration to encrypt the data once it is copied to the SageMaker instance in a VP

- E. Use the SageMaker principal component analysis (PCA) algorithm to reduce the length of the credit card numbers.

- F. Use AWS KMS to encrypt the data on Amazon S3 and Amazon SageMaker, and redact the credit card numbers from the customer data with AWS Glue.

Answer: D

NEW QUESTION 17

......

P.S. Easily pass AWS-Certified-Machine-Learning-Specialty Exam with 307 Q&As Dumpscollection.com Dumps & pdf Version, Welcome to Download the Newest Dumpscollection.com AWS-Certified-Machine-Learning-Specialty Dumps: https://www.dumpscollection.net/dumps/AWS-Certified-Machine-Learning-Specialty/ (307 New Questions)

- All About Precise AWS-Certified-Solutions-Architect-Professional Testing Engine

- [2021-New] Amazon AWS-Solution-Architect-Associate Dumps With Update Exam Questions (281-290)

- [2021-New] Amazon AWS-Certified-Developer-Associate Dumps With Update Exam Questions (21-30)

- [2021-New] Amazon AWS-Certified-Developer-Associate Dumps With Update Exam Questions (31-40)

- [2021-New] Amazon AWS-SysOps Dumps With Update Exam Questions (141-150)

- [2021-New] Amazon AWS-SysOps Dumps With Update Exam Questions (41-50)

- [2021-New] Amazon AWS-Certified-Developer-Associate Dumps With Update Exam Questions (81-90)

- [2021-New] Amazon AWS-SysOps Dumps With Update Exam Questions (131-140)

- [2021-New] Amazon AWS-Solution-Architect-Associate Dumps With Update Exam Questions (131-140)

- [2021-New] Amazon AWS-SysOps Dumps With Update Exam Questions (121-130)