AWS-Certified-Solutions-Architect-Professional Exam Questions - Online Test

AWS-Certified-Solutions-Architect-Professional Premium VCE File

150 Lectures, 20 Hours

Cause all that matters here is passing the Amazon AWS-Certified-Solutions-Architect-Professional exam. Cause all that you need is a high score of AWS-Certified-Solutions-Architect-Professional Amazon AWS Certified Solutions Architect Professional exam. The only one thing you need to do is downloading Pass4sure AWS-Certified-Solutions-Architect-Professional exam study guides now. We will not let you down with our money-back guarantee.

Amazon AWS-Certified-Solutions-Architect-Professional Free Dumps Questions Online, Read and Test Now.

NEW QUESTION 1

A health insurance company stores personally identifiable information (PII) in an Amazon S3 bucket. The company uses server-side encryption with S3 managed encryption keys (SSE-S3) to encrypt the objects. According to a new requirement, all current and future objects in the S3 bucket must be encrypted by keys that the company’s security team manages. The S3 bucket does not have versioning enabled. Which solution will meet these requirements?

- A. In the S3 bucket properties, change the default encryption to SSE-S3 with a customer managed ke

- B. Use the AWS CLI to re-upload all objects in the S3 bucke

- C. Set an S3 bucket policy to deny unencrypted PutObject requests.

- D. In the S3 bucket properties, change the default encryption to server-side encryption with AWS KMS managed encryption keys (SSE-KMS). Set an S3 bucket policy to deny unencrypted PutObject request

- E. Use the AWS CLI to re-upload all objects in the S3 bucket.

- F. In the S3 bucket properties, change the default encryption to server-side encryption with AWS KMS managed encryption keys (SSE-KMS). Set an S3 bucket policy to automatically encrypt objects on GetObject and PutObject requests.

- G. In the S3 bucket properties, change the default encryption to AES-256 with a customer managed key.Attach a policy to deny unencrypted PutObject requests to any entities that access the S3 bucke

- H. Use the AWS CLI to re-upload all objects in the S3 bucket.

Answer: D

Explanation:

https://docs.aws.amazon.com/AmazonS3/latest/userguide/ServerSideEncryptionCustomerKeys.html Clearly says we need following header for SSE-C x-amz-server-side-encryption-customer-algorithm Use this header to specify the encryption algorithm. The header value must be AES256.

NEW QUESTION 2

A company has an loT platform that runs in an on-premises environment. The platform consists of a server that connects to loT devices by using the MQTT protocol. The platform collects telemetry data from the devices at least once every 5 minutes The platform also stores device metadata in a MongoDB cluster

An application that is installed on an on-premises machine runs periodic jobs to aggregate and transform the telemetry and device metadata The application creates reports that users view by using another web application that runs on the same on-premises machine The periodic jobs take 120-600 seconds to run However, the web application is always running.

The company is moving the platform to AWS and must reduce the operational overhead of the stack. Which combination of steps will meet these requirements with the LEAST operational overhead? (Select

THREE.)

- A. Use AWS Lambda functions to connect to the loT devices

- B. Configure the loT devices to publish to AWS loT Core

- C. Write the metadata to a self-managed MongoDB database on an Amazon EC2 instance

- D. Write the metadata to Amazon DocumentDB (with MongoDB compatibility)

- E. Use AWS Step Functions state machines with AWS Lambda tasks to prepare the reports and to write the reports to Amazon S3 Use Amazon CloudFront with an S3 origin to serve the reports

- F. Use an Amazon Elastic Kubernetes Service (Amazon EKS) cluster with Amazon EC2 instances to prepare the reports Use an ingress controller in the EKS cluster to serve the reports

Answer: BDE

Explanation:

https://aws.amazon.com/step-functions/use-cases/

NEW QUESTION 3

A company hosts a Git repository in an on-premises data center. The company uses webhooks to invoke functionality that runs in the AWS Cloud. The company hosts the webhook logic on a set of Amazon EC2 instances in an Auto Scaling group that the company set as a target for an Application Load Balancer (ALB). The Git server calls the ALB for the configured webhooks. The company wants to move the solution to a serverless architecture.

Which solution will meet these requirements with the LEAST operational overhead?

- A. For each webhook, create and configure an AWS Lambda function UR

- B. Update the Git servers to call the individual Lambda function URLs.

- C. Create an Amazon API Gateway HTTP AP

- D. Implement each webhook logic in a separate AWS Lambda functio

- E. Update the Git servers to call the API Gateway endpoint.

- F. Deploy the webhook logic to AWS App Runne

- G. Create an ALB, and set App Runner as the target.Update the Git servers to call the ALB endpoint.

- H. Containerize the webhook logi

- I. Create an Amazon Elastic Container Service (Amazon ECS) cluster, and run the webhook logic in AWS Fargat

- J. Create an Amazon API Gateway REST API, and set Fargate as the targe

- K. Update the Git servers to call the API Gateway endpoint.

Answer: B

Explanation:

https://aws.amazon.com/solutions/implementations/git-to-s3-using-webhooks/ https://medium.com/mindorks/building-webhook-is-easy-using-aws-lambda-and-api-gateway-56f5e5c3a596

NEW QUESTION 4

A life sciences company is using a combination of open source tools to manage data analysis workflows and Docker containers running on servers in its on-premises data center to process genomics data Sequencing data is generated and stored on a local storage area network (SAN), and then the data is processed. The research and development teams are running into capacity issues and have decided to re-architect their genomics analysis platform on AWS to scale based on workload demands and reduce the turnaround time from weeks to days

The company has a high-speed AWS Direct Connect connection Sequencers will generate around 200 GB of data for each genome, and individual jobs can take several hours to process the data with ideal compute capacity. The end result will be stored in Amazon S3. The company is expecting 10-15 job requests each day

Which solution meets these requirements?

- A. Use regularly scheduled AWS Snowball Edge devices to transfer the sequencing data into AWS When AWS receives the Snowball Edge device and the data is loaded into Amazon S3 use S3 events to trigger an AWS Lambda function to process the data

- B. Use AWS Data Pipeline to transfer the sequencing data to Amazon S3 Use S3 events to trigger an Amazon EC2 Auto Scaling group to launch custom-AMI EC2 instances running the Docker containers to process the data

- C. Use AWS DataSync to transfer the sequencing data to Amazon S3 Use S3 events to trigger an AWS Lambda function that starts an AWS Step Functions workflow Store the Docker images in Amazon Elastic Container Registry (Amazon ECR) and trigger AWS Batch to run the container and process the sequencing data

- D. Use an AWS Storage Gateway file gateway to transfer the sequencing data to Amazon S3 Use S3 events to trigger an AWS Batch job that runs on Amazon EC2 instances running the Docker containers to process the data

Answer: C

Explanation:

AWS DataSync can be used to transfer the sequencing data to Amazon S3, which is a more efficient and faster method than using Snowball Edge devices. Once the data is in S3, S3 events can trigger an AWS Lambda function that starts an AWS Step Functions workflow. The Docker images can be stored in Amazon Elastic Container Registry (Amazon ECR) and AWS Batch can be used to run the container and process the sequencing data.

NEW QUESTION 5

A company is building an image service on the web that will allow users to upload and search random photos. At peak usage, up to 10.000 users worldwide will upload their images. The service will then overlay text on the uploaded images, which will then be published on the company website.

Which design should a solutions architect implement?

- A. Store the uploaded images in Amazon Elastic File System (Amazon EFS). Send application log information about each image to Amazon CloudWatch Logs Create a fleet of Amazon EC2 instances that use CloudWatch Logs to determine which images need to be processed Place processed images in another directory in Amazon EF

- B. Enable Amazon CloudFront and configure the origin to be the one of the EC2 instances in the fleet

- C. Store the uploaded images in an Amazon S3 bucket and configure an S3 bucket event notification to send a message to Amazon Simple Notification Service (Amazon SNS) Create a fleet of Amazon EC2 instances behind an Application Load Balancer (ALB) to pull messages from Amazon SNS to process the images and place them in Amazon Elastic File System (Amazon EFS) Use Amazon CloudWatch metrics for the SNS message volume to scale out EC2 instance

- D. Enable Amazon CloudFront and configure the origin to be the ALB in front of the EC2 instances

- E. Store the uploaded images in an Amazon S3 bucket and configure an S3 bucket event notification to send a message to the Amazon Simple Queue Service (Amazon SQS) queue Create a fleet of Amazon EC2 instances to pull messages from the SQS queue to process the images and place them in another S3 bucke

- F. Use Amazon CloudWatch metncs for queue depth to scale out EC2 instances Enable Amazon CloudFront and configure the origin to be the S3 bucket that contains the processed images.

- G. Store the uploaded images on a shared Amazon Elastic Block Store (Amazon EBS) volume amounted to a fleet of Amazon EC2 Spot instance

- H. Create an AmazonDynamoDB table that contains information about each uploaded image and whether it has been processed Use an Amazon EventBndge rule to scale out EC2 instance

- I. Enable Amazon CloudFront and configure the origin to reference an Elastic Load Balancer in front of the fleet of EC2 instances.

Answer: C

Explanation:

(Store the uploaded images in an S3 bucket and use S3 event notification with SQS queue) is the most suitable design. Amazon S3 provides highly scalable and durable storage for the uploaded images. Configuring S3 event notifications to send messages to an SQS queue allows for decoupling the processing of images from the upload process. A fleet of EC2 instances can pull messages from the SQS queue to process the images and store them in another S3 bucket. Scaling out the EC2 instances based on SQS queue depth using CloudWatch metrics ensures efficient utilization of resources. Enabling Amazon CloudFront with the origin set to the S3 bucket containing the processed images improves the global availability and performance of image delivery.

NEW QUESTION 6

A company runs its application on Amazon EC2 instances and AWS Lambda functions. The EC2 instances experience a continuous and stable load. The Lambda functions experience a varied and unpredictable load. The application includes a caching layer that uses an Amazon MemoryDB for Redis cluster.

A solutions architect must recommend a solution to minimize the company's overall monthly costs. Which solution will meet these requirements?

- A. Purchase an EC2 Instance Savings Plan to cover the EC2 instance

- B. Purchase a Compute Savings Plan for Lambda to cover the minimum expected consumption of the Lambda function

- C. Purchase reserved nodes to cover the MemoryDB cache nodes.

- D. Purchase a Compute Savings Plan to cover the EC2 instance

- E. Purchase Lambda reserved concurrency to cover the expected Lambda usag

- F. Purchase reserved nodes to cover the MemoryDB cache nodes.

- G. Purchase a Compute Savings Plan to cover the entire expected cost of the EC2 instances, Lambda functions, and MemoryDB cache nodes.

- H. Purchase a Compute Savings Plan to cover the EC2 instances and the MemoryDB cache nodes.Purchase Lambda reserved concurrency to cover the expected Lambda usage.

Answer: A

Explanation:

This option uses different types of savings plans and reserved nodes to minimize the company’s overall monthly costs for running its application on EC2 instances, Lambda functions, and MemoryDB cache nodes. Savings plans are flexible pricing models that offer significant savings on AWS usage (up to 72%) in exchange for a commitment of a consistent amount of usage (measured in $/hour) for a one-year or three-year term. There are two types of savings plans: Compute Savings Plans and EC2 Instance Savings Plans. Compute Savings Plans apply to any compute usage across EC2 instances, Fargate containers, Lambda functions, SageMaker notebooks, and ECS tasks. EC2 Instance Savings Plans apply to a specific instance family within a region and provide more savings than Compute Savings Plans (up to 66% versus up to 54%). Reserved nodes are similar to savings plans but apply only to MemoryDB cache nodes. They offer up to 55% savings compared to on-demand pricing.

NEW QUESTION 7

A retail company needs to provide a series of data files to another company, which is its business partner These files are saved in an Amazon S3 bucket under Account A. which belongs to the retail company. The business partner company wants one of its 1AM users. User_DataProcessor. to access the files from its own AWS account (Account B).

Which combination of steps must the companies take so that User_DataProcessor can access the S3 bucket successfully? (Select TWO.)

- A. Turn on the cross-origin resource sharing (CORS) feature for the S3 bucket in Account

- B. In Account

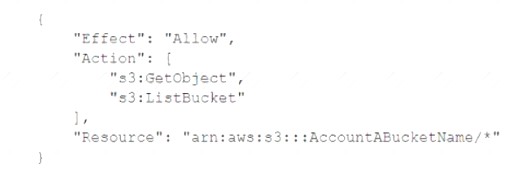

- C. set the S3 bucket policy to the following:

- D. In Account

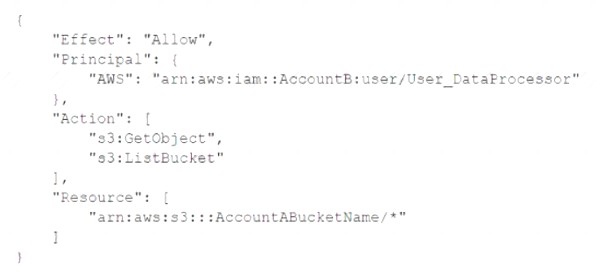

- E. set the S3 bucket policy to the following:

- F. In Account

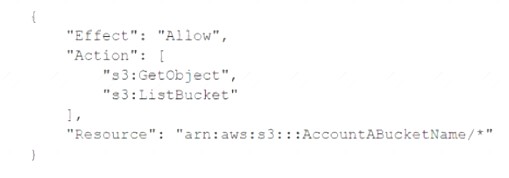

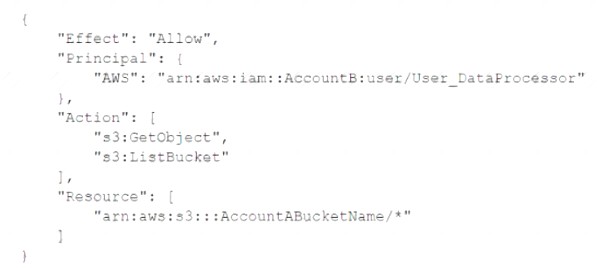

- G. set the permissions of User_DataProcessor to the following:

- H. In Account Bt set the permissions of User_DataProcessor to the following:

Answer: CD

Explanation:

https://aws.amazon.com/premiumsupport/knowledge-center/cross-account-access-s3/

NEW QUESTION 8

A company has an application that runs as a ReplicaSet of multiple pods in an Amazon Elastic Kubernetes Service (Amazon EKS) cluster. The EKS cluster has nodes in multiple Availability Zones. The application generates many small files that must be accessible across all running instances of the application. The company needs to back up the files and retain the backups for 1 year.

Which solution will meet these requirements while providing the FASTEST storage performance?

- A. Create an Amazon Elastic File System (Amazon EFS) file system and a mount target for each subnet that contains nodes in the EKS cluste

- B. Configure the ReplicaSet to mount the file syste

- C. Direct the application to store files in the file syste

- D. Configure AWS Backup to back up and retain copies of the data for 1 year.

- E. Create an Amazon Elastic Block Store (Amazon EBS) volum

- F. Enable the EBS Multi-Attach feature.Configure the ReplicaSet to mount the EBS volum

- G. Direct the application to store files in the EBS volum

- H. Configure AWS Backup to back up and retain copies of the data for 1 year.

- I. Create an Amazon S3 bucke

- J. Configure the ReplicaSet to mount the S3 bucke

- K. Direct the application to store files in the S3 bucke

- L. Configure S3 Versioning to retain copies of the dat

- M. Configure an S3 Lifecycle policy to delete objects after 1 year.

- N. Configure the ReplicaSet to use the storage available on each of the running application pods to store the files locall

- O. Use a third-party tool to back up the EKS cluster for 1 year.

Answer: A

Explanation:

In the past, EBS can be attached only to one ec2 instance but not anymore but there are limitations like - it works only on io1/io2 instance types and many others as described here. https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/ebs-volumes-multi.html EFS has shareable storage

In terms of performance, Amazon EFS is optimized for workloads that require high levels of aggregate throughput and IOPS, whereas EBS is optimized for low-latency, random access I/O operations. Amazon EFS is designed to scale throughput and capacity automatically as your storage needs grow, while EBS volumes can be resized on demand.

NEW QUESTION 9

A company consists of two separate business units. Each business unit has its own AWS account within a single organization in AWS Organizations. The business units regularly share sensitive documents with each other. To facilitate sharing, the company created an Amazon S3 bucket in each account and configured two-way replication between the S3 buckets. The S3 buckets have millions of objects.

Recently, a security audit identified that neither S3 bucket has encryption at rest enabled. Company policy requires that all documents must be stored with encryption at rest. The company wants to implement server-side encryption with Amazon S3 managed encryption keys (SSE-S3).

What is the MOST operationally efficient solution that meets these requirements?

- A. Turn on SSE-S3 on both S3 bucket

- B. Use S3 Batch Operations to copy and encrypt the objects in the same location.

- C. Create an AWS Key Management Service (AWS KMS) key in each accoun

- D. Turn on server-side encryption with AWS KMS keys (SSE-KMS) on each S3 bucket by using the corresponding KMS key in that AWS accoun

- E. Encrypt the existing objects by using an S3 copy command in the AWS CLI.

- F. Turn on SSE-S3 on both S3 bucket

- G. Encrypt the existing objects by using an S3 copy command in the AWS CLI.

- H. Create an AWS Key Management Service (AWS KMS) key in each accoun

- I. Turn on server-side encryption with AWS KMS keys (SSE-KMS) on each S3 bucket by using the corresponding KMS key in that AWS accoun

- J. Use S3 Batch Operations to copy the objects into the same location.

Answer: A

Explanation:

"The S3 buckets have millions of objects" If there are million of objects then you should use Batch operations. https://aws.amazon.com/blogs/storage/encrypting-objects-with-amazon-s3-batch-operations/

NEW QUESTION 10

A company deploys a new web application. As pari of the setup, the company configures AWS WAF to log to Amazon S3 through Amazon Kinesis Data Firehose. The company develops an Amazon Athena query that runs once daily to return AWS WAF log data from the previous 24 hours. The volume of daily logs is constant. However, over time, the same query is taking more time to run.

A solutions architect needs to design a solution to prevent the query time from continuing to increase. The solution must minimize operational overhead.

Which solution will meet these requirements?

- A. Create an AWS Lambda function that consolidates each day's AWS WAF logs into one log file.

- B. Reduce the amount of data scanned by configuring AWS WAF to send logs to a different S3 bucket each day.

- C. Update the Kinesis Data Firehose configuration to partition the data in Amazon S3 by date and time.Create external tables for Amazon Redshif

- D. Configure Amazon Redshift Spectrum to query the data source.

- E. Modify the Kinesis Data Firehose configuration and Athena table definition to partition the data by date and tim

- F. Change the Athena query to view the relevant partitions.

Answer: D

Explanation:

The best solution is to modify the Kinesis Data Firehose configuration and Athena table definition to partition the data by date and time. This will reduce the amount of data scanned by Athena and improve the query performance. Changing the Athena query to view the relevant partitions will also help to filter out unnecessary data. This solution requires minimal operational overhead as it does not involve creating additional resources or changing the log format. References: [AWS WAF Developer Guide], [Amazon Kinesis Data Firehose Use Guide], [Amazon Athena User Guide]

NEW QUESTION 11

A company has developed a mobile game. The backend for the game runs on several virtual machines located in an on-premises data center. The business logic is exposed using a REST API with multiple functions. Player session data is stored in central file storage. Backend services use different API keys for throttling and to distinguish between live and test traffic.

The load on the game backend varies throughout the day. During peak hours, the server capacity is not sufficient. There are also latency issues when fetching player session data. Management has asked a solutions architect to present a cloud architecture that can handle the game's varying load and provide low-latency data access. The API model should not be changed.

Which solution meets these requirements?

- A. Implement the REST API using a Network Load Balancer (NLB). Run the business logic on an Amazon EC2 instance behind the NL

- B. Store player session data in Amazon Aurora Serverless.

- C. Implement the REST API using an Application Load Balancer (ALB). Run the business logic in AWS Lambd

- D. Store player session data in Amazon DynamoDB with on-demand capacity.

- E. Implement the REST API using Amazon API Gatewa

- F. Run the business logic in AWS Lambd

- G. Store player session data in Amazon DynamoDB with on- demand capacity.

- H. Implement the REST API using AWS AppSyn

- I. Run the business logic in AWS Lambd

- J. Store player session data in Amazon Aurora Serverless.

Answer: C

NEW QUESTION 12

A company uses AWS Organizations to manage more than 1.000 AWS accounts. The company has created a new developer organization. There are 540 developer member accounts that must be moved to the new developer organization. All accounts are set up with all the required Information so that each account can be operated as a standalone account.

Which combination of steps should a solutions architect take to move all of the developer accounts to the new developer organization? (Select THREE.)

- A. Call the MoveAccount operation in the Organizations API from the old organization's management account to migrate the developer accounts to the new developer organization.

- B. From the management account, remove each developer account from the old organization using the RemoveAccountFromOrganization operation in the Organizations API.

- C. From each developer account, remove the account from the old organization using theRemoveAccountFromOrganization operation in the Organizations API.

- D. Sign in to the new developer organization's management account and create a placeholder member account that acts as a target for the developer account migration.

- E. Call the InviteAccountToOrganization operation in the Organizations API from the new developer organization's management account to send invitations to the developer accounts.

- F. Have each developer sign in to their account and confirm to join the new developer organization.

Answer: BEF

Explanation:

"This operation can be called only from the organization's management account. Member accounts can remove themselves with LeaveOrganization instead." https://docs.aws.amazon.com/organizations/latest/APIReference/API_RemoveAccountFromOrganization.html

NEW QUESTION 13

A company implements a containerized application by using Amazon Elastic Container Service (Amazon ECS) and Amazon API Gateway. The application data is stored in Amazon Aurora databases and Amazon DynamoDB databases The company automates infrastructure provisioning by using AWS CloudFormation The company automates application deployment by using AWS CodePipeline.

A solutions architect needs to implement a disaster recovery (DR) strategy that meets an RPO of 2 hours and an RTO of 4 hours.

Which solution will meet these requirements MOST cost-effectively'?

- A. Set up an Aurora global database and DynamoDB global tables to replicate the databases to a secondary AWS Regio

- B. In the primary Region and in the secondary Region, configure an API Gateway API with a Regional Endpoint Implement Amazon CloudFront with origin failover to route traffic to the secondary Region during a DR scenario

- C. Use AWS Database Migration Service (AWS DMS). Amazon EventBridg

- D. and AWS Lambda to replicate the Aurora databases to a secondary AWS Region Use DynamoDB Streams EventBridge, and Lambda to replicate the DynamoDB databases to the secondary Regio

- E. In the primary Region and in the secondary Region, configure an API Gateway API with a Regional Endpoint Implement Amazon Route 53 failover routing to switch traffic from the primary Region to the secondary Region.

- F. Use AWS Backup to create backups of the Aurora databases and the DynamoDB databases in a secondary AWS Regio

- G. In the primary Region and in the secondary Region, configure an API Gateway API with a Regional endpoin

- H. Implement Amazon Route 53 failover routing to switch traffic from the primary Region to the secondary Region

- I. Set up an Aurora global database and DynamoDB global tables to replicate the databases to a secondary AWS Regio

- J. In the primary Region and in the secondaryRegion, configure an API Gateway API with a Regional endpoint Implement Amazon Route 53 failover routing to switch traffic from the primary Region to the secondary Region

Answer: C

Explanation:

https://aws.amazon.com/blogs/database/cost-effective-disaster-recovery-for-amazon-aurora-databases-using-aws

NEW QUESTION 14

A solutions architect needs to advise a company on how to migrate its on-premises data processing application to the AWS Cloud. Currently, users upload input files through a web portal. The web server then stores the uploaded files on NAS and messages the processing server over a message queue. Each media file can take up to 1 hour to process. The company has determined that the number of media files awaiting processing is significantly higher during business hours, with the number of files rapidly declining after business hours.

What is the MOST cost-effective migration recommendation?

- A. Create a queue using Amazon SQ

- B. Configure the existing web server to publish to the new queue.When there are messages in the queue, invoke an AWS Lambda function to pull requests from the queue and process the file

- C. Store the processed files in an Amazon S3 bucket.

- D. Create a queue using Amazon

- E. Configure the existing web server to publish to the new queu

- F. When there are messages in the queue, create a new Amazon EC2 instance to pull requests from the queue and process the file

- G. Store the processed files in Amazon EF

- H. Shut down the EC2 instance after the task is complete.

- I. Create a queue using Amazon M

- J. Configure the existing web server to publish to the new queue.When there are messages in the queue, invoke an AWS Lambda function to pull requests from the queue and process the file

- K. Store the processed files in Amazon EFS.

- L. Create a queue using Amazon SO

- M. Configure the existing web server to publish to the new queu

- N. Use Amazon EC2 instances in an EC2 Auto Scaling group to pull requests from the queue and process the file

- O. Scale the EC2 instances based on the SOS queue lengt

- P. Store the processed files in an Amazon S3 bucket.

Answer: D

Explanation:

https://aws.amazon.com/blogs/compute/operating-lambda-performance-optimization-part-1/

NEW QUESTION 15

A solutions architect is designing the data storage and retrieval architecture for a new application that a company will be launching soon. The application is designed to ingest millions of small records per minute from devices all around the world. Each record is less than 4 KB in size and needs to be stored in a durable location where it can be retrieved with low latency. The data is ephemeral and the company is required to store the data for 120 days only, after which the data can be deleted.

The solutions architect calculates that, during the course of a year, the storage requirements would be about 10-15 TB.

Which storage strategy is the MOST cost-effective and meets the design requirements?

- A. Design the application to store each incoming record as a single .csv file in an Amazon S3 bucket to allow for indexed retrieva

- B. Configure a lifecycle policy to delete data older than 120 days.

- C. Design the application to store each incoming record in an Amazon DynamoDB table properly configured for the scal

- D. Configure the DynamoOB Time to Live (TTL) feature to delete records older than 120 days.

- E. Design the application to store each incoming record in a single table in an Amazon RDS MySQL databas

- F. Run a nightly cron job that executes a query to delete any records older than 120 days.

- G. Design the application to batch incoming records before writing them to an Amazon S3 bucke

- H. Updatethe metadata for the object to contain the list of records in the batch and use the Amazon S3 metadata search feature to retrieve the dat

- I. Configure a lifecycle policy to delete the data after 120 days.

Answer: B

Explanation:

DynamoDB with TTL, cheaper for sustained throughput of small items + suited for fast retrievals. S3 cheaper for storage only, much higher costs with writes. RDS not designed for this use case.

NEW QUESTION 16

A company uses AWS Organizations to manage a multi-account structure. The company has hundreds of AWS accounts and expects the number of accounts to increase. The company is building a new application that uses Docker images. The company will push the Docker images to Amazon Elastic Container Registry (Amazon ECR). Only accounts that are within the company's organization should have access to the images.

The company has a CI/CD process that runs frequently. The company wants to retain all the tagged images. However, the company wants to retain only the five most recent untagged images.

Which solution will meet these requirements with the LEAST operational overhead?

- A. Create a private repository in Amazon EC

- B. Create a permissions policy for the repository that allows only required ECR operation

- C. Include a condition to allow the ECR operations if the value of the aws:PrincipalOrglD condition key is equal to the ID of the company's organizatio

- D. Add a lifecycle rule to the ECR repository that deletes all untagged images over the count of five.

- E. Create a public repository in Amazon EC

- F. Create an IAM role in the ECR accoun

- G. Set permissions so that any account can assume the role if the value of the aws:PrincipalOrglD condition key is equal to the ID of the company's organizatio

- H. Add a lifecycle rule to the ECR repository that deletes all untagged images over the count of five.

- I. Create a private repository in Amazon EC

- J. Create a permissions policy for the repository that includes only required ECR operation

- K. Include a condition to allow the ECR operations for all account IDs in the organizatio

- L. Schedule a daily Amazon EventBridge rule to invoke an AWS Lambda function that deletes all untagged images over the count of five.

- M. Create a public repository in Amazon EC

- N. Configure Amazon ECR to use an interface VPC endpoint with an endpoint policy that includes the required permissions for images that the company needs to pul

- O. Include a condition to allow the ECR operations for all account IDs in the company's organizatio

- P. Schedule a daily Amazon EventBridge rule to invoke an AWS Lambda function that deletes all untagged images over the count of five.

Answer: A

Explanation:

This option allows the company to use a private repository in Amazon ECR to store and manage its Docker images securely and efficiently1. By creating a permissions policy for the repository that allows only required ECR operations, such as ecr:GetDownloadUrlForLayer, ecr:BatchGetImage, ecr:BatchCheckLayerAvailability, ecr:PutImage, and ecr:InitiateLayerUpload2, the company can restrict access to the repository and prevent unauthorized actions. By including a condition to allow the ECR operations if the value of the aws:PrincipalOrgID condition key is equal to the ID of the company’s organization, the company can ensure that only accounts that are within its organization can access the images3. By adding a lifecycle rule to the ECR repository that deletes all untagged images over the count of five, the company can reduce storage costs and retain only the most recent untagged images4.

References: Amazon ECR private repositories

Amazon ECR private repositories  Amazon ECR repository policies

Amazon ECR repository policies Restricting access to AWS Organizations members

Restricting access to AWS Organizations members  Amazon ECR lifecycle policies

Amazon ECR lifecycle policies

NEW QUESTION 17

A company runs a content management application on a single Windows Amazon EC2 instance in a development environment. The application reads and writes static content to a 2 TB Amazon Elastic Block Store (Amazon EBS) volume that is attached to the instance as the root device. The company plans to deploy this application in production as a highly available and fault-tolerant solution that runs on at least three EC2 instances across multiple Availability Zones.

A solutions architect must design a solution that joins all the instances that run the application to an Active Directory domain. The solution also must implement Windows ACLs to control access to file contents. The application always must maintain exactly the same content on all running instances at any given point in time.

Which solution will meet these requirements with the LEAST management overhead?

- A. Create an Amazon Elastic File System (Amazon EFS) file shar

- B. Create an Auto Scaling group that extends across three Availability Zones and maintains a minimum size of three instance

- C. Implement a user data script to install the application, join the instance to the AD domain, and mount the EFS file share.

- D. Create a new AMI from the current EC2 instance that is runnin

- E. Create an Amazon FSx for Lustre file syste

- F. Create an Auto Scaling group that extends across three Availability Zones and maintains a minimum size of three instance

- G. Implement a user data script to join the instance to the AD domain and mount the FSx for Lustre file system.

- H. Create an Amazon FSx for Windows File Server file syste

- I. Create an Auto Scaling group that extends across three Availability Zones and maintains a minimum size of three instance

- J. Implement a user data script to install the application and mount the FSx for Windows File Server file syste

- K. Perform a seamless domain join to join the instance to the AD domain.

- L. Create a new AMI from the current EC2 instance that is runnin

- M. Create an Amazon Elastic File System (Amazon EFS) file syste

- N. Create an Auto Scaling group that extends across three Availability Zones and maintains a minimum size of three instance

- O. Perform a seamless domain join to join the instance to the AD domain.

Answer: C

Explanation:

https://docs.aws.amazon.com/fsx/latest/WindowsGuide/what-is.html https://docs.aws.amazon.com/directoryservice/latest/admin-guide/ms_ad_join_instance.html

NEW QUESTION 18

A software as a service (SaaS) company uses AWS to host a service that is powered by AWS PrivateLink. The service consists of proprietary software that runs on three Amazon EC2 instances behind a Network Load Balancer (NL B). The instances are in private subnets in multiple Availability Zones in the eu-west-2 Region. All the company's customers are in eu-west-2.

However, the company now acquires a new customer in the us-east-I Region. The company creates a new VPC and new subnets in us-east-I. The company establishes

inter-Region VPC peering between the VPCs in the two Regions.

The company wants to give the new customer access to the SaaS service, but the company does not want to immediately deploy new EC2 resources in us-east-I

Which solution will meet these requirements?

- A. Configure a PrivateLink endpoint service in us-east-I to use the existing NL B that is in eu-west-2. Grant specific AWS accounts access to connect to the SaaS service.

- B. Create an NL B in us-east-I . Create an IP target group that uses the IP addresses of the company's instances in eu-west-2 that host the SaaS servic

- C. Configure a PrivateLink endpoint service that uses the NLB that is in us-east-I . Grant specific AWS accounts access to connect to the SaaS service.

- D. Create an Application Load Balancer (ALB) in front of the EC2 instances in eu-west-2. Create an NLB in us-east-I . Associate the NLB that is in us-east-I with an ALB target group that uses the ALB that is in eu-west-2. Configure a PrivateLink endpoint service that uses the NLB that is in us-east-I . Grant specific AWS accounts access to connect to the SaaS service.

- E. Use AWS Resource Access Manager (AWS RAM) to share the EC2 instances that are in eu-west-2. Inus-east-I , create an NLB and an instance target group that includes the shared EC2 instances from eu-west-2. Configure a PrivateLink endpoint service that uses the NL B that is in us-east-

- F. Grant specific AWS accounts access to connect to the SaaS service.

Answer: B

NEW QUESTION 19

A company is developing a new on-demand video application that is based on microservices. The application will have 5 million users at launch and will have 30 million users after 6 months. The company has deployed the application on Amazon Elastic Container Service (Amazon ECS) on AWS Fargate. The company developed the application by using ECS services that use the HTTPS protocol.

A solutions architect needs to implement updates to the application by using blue/green deployments. The solution must distribute traffic to each ECS service through a load balancer. The application must automatically adjust the number of tasks in response to an Amazon CloudWatch alarm.

Which solution will meet these requirements?

- A. Configure the ECS services to use the blue/green deployment type and a Network Load Balancer.Request increases to the service quota for tasks per service to meet the demand.

- B. Configure the ECS services to use the blue/green deployment type and a Network Load Balancer.Implement an Auto Scaling group for each ECS service by using the Cluster Autoscaler.

- C. Configure the ECS services to use the blue/green deployment type and an Application Load Balancer.Implement an Auto Seating group for each ECS service by using the Cluster Autoscaler.

- D. Configure the ECS services to use the blue/green deployment type and an Application Load Balancer.Implement Service Auto Scaling for each ECS service.

Answer: D

Explanation:

https://repost.aws/knowledge-center/ecs-fargate-service-auto-scaling

NEW QUESTION 20

A company has a data lake in Amazon S3 that needs to be accessed by hundreds of applications across many AWS accounts. The company's information security policy states that the S3 bucket must not be accessed over the public internet and that each application should have the minimum permissions necessary to function.

To meet these requirements, a solutions architect plans to use an S3 access point that is restricted to specific VPCs for each application.

Which combination of steps should the solutions architect take to implement this solution? (Select TWO.)

- A. Create an S3 access point for each application in the AWS account that owns the S3 bucke

- B. Configureeach access point to be accessible only from the application's VP

- C. Update the bucket policy to require access from an access point.

- D. Create an interface endpoint for Amazon S3 in each application's VP

- E. Configure the endpoint policy to allow access to an S3 access poin

- F. Create a VPC gateway attachment for the S3 endpoint.

- G. Create a gateway endpoint for Amazon S3 in each application's VP

- H. Configure the endpoint policy to allow access to an S3 access poin

- I. Specify the route table that is used to access the access point.

- J. Create an S3 access point for each application in each AWS account and attach the access points to the S3 bucke

- K. Configure each access point to be accessible only from the application's VP

- L. Update the bucket policy to require access from an access point.

- M. Create a gateway endpoint for Amazon S3 in the data lake's VP

- N. Attach an endpoint policy to allow access to the S3 bucke

- O. Specify the route table that is used to access the bucket.

Answer: AC

Explanation:

https://joe.blog.freemansoft.com/2020/04/protect-data-in-cloud-with-s3-access.html

NEW QUESTION 21

......

Thanks for reading the newest AWS-Certified-Solutions-Architect-Professional exam dumps! We recommend you to try the PREMIUM Downloadfreepdf.net AWS-Certified-Solutions-Architect-Professional dumps in VCE and PDF here: https://www.downloadfreepdf.net/AWS-Certified-Solutions-Architect-Professional-pdf-download.html (483 Q&As Dumps)

- [2021-New] Amazon AWS-Certified-Developer-Associate Dumps With Update Exam Questions (51-60)

- [2021-New] Amazon AWS-SysOps Dumps With Update Exam Questions (111-120)

- [2021-New] Amazon AWS-Solution-Architect-Associate Dumps With Update Exam Questions (271-280)

- [2021-New] Amazon AWS-SysOps Dumps With Update Exam Questions (41-50)

- High Quality AWS-Certified-Security-Specialty Preparation 2021

- [2021-New] Amazon AWS-Certified-Developer-Associate Dumps With Update Exam Questions (21-30)

- [2021-New] Amazon AWS-SysOps Dumps With Update Exam Questions (31-40)

- What Practical AWS-Certified-Solutions-Architect-Professional Test Preparation Is

- [2021-New] Amazon AWS-SysOps Dumps With Update Exam Questions (121-130)

- [2021-New] Amazon AWS-Solution-Architect-Associate Dumps With Update Exam Questions (101-110)